100 Days Of DevOps

Day 1: Linux User Setup with Non-Interactive Shell

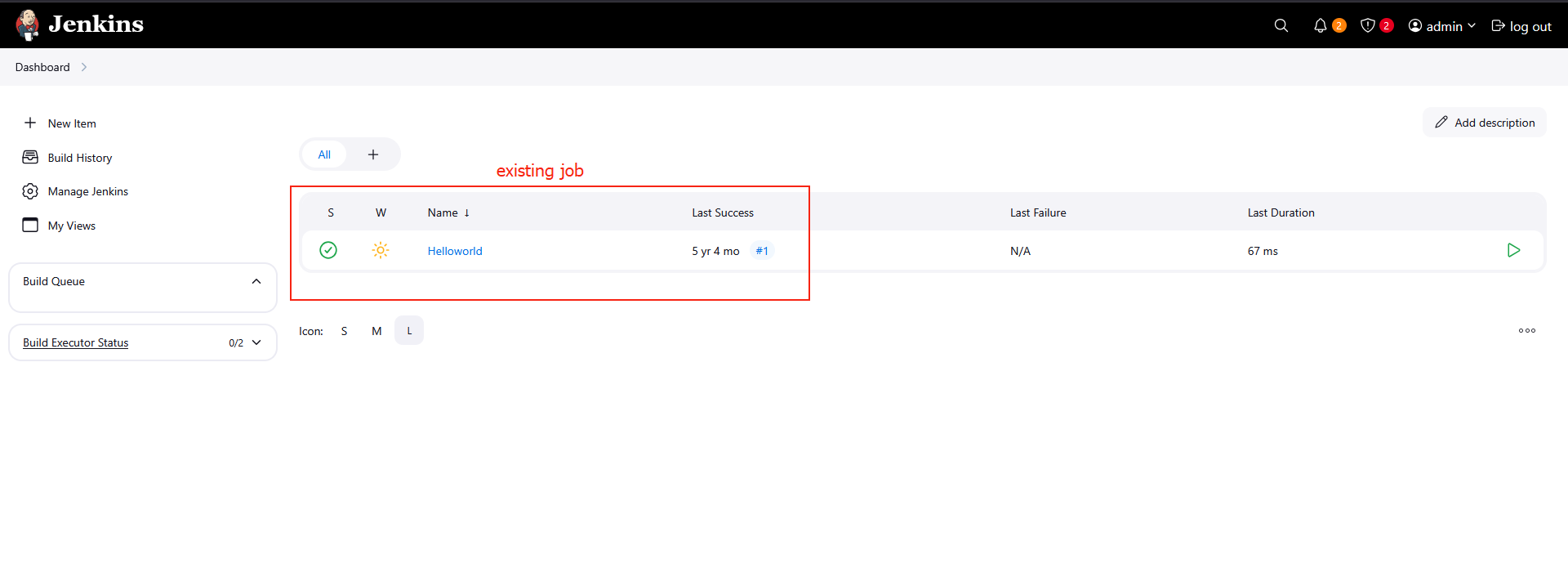

Creating a user with a non-interactive shell

Create a user with a non-interactive shell for your organization on a specific server. This is essential for service accounts and automated processes that don’t require interactive login capabilities.

sudo useradd -s /sbin/nologin kristy
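To confirm the account really has no interactive shell, look at the seventh field of its /etc/passwd entry, which should read /sbin/nologin. A minimal sketch of that check (the function name is illustrative; the optional second argument only exists so it can be tried against a sample file):

```shell
#!/bin/bash
# Print the login shell (7th colon-separated field) of a user from a
# passwd-format file; defaults to the system's /etc/passwd.
login_shell() {
  local user="$1" file="${2:-/etc/passwd}"
  awk -F: -v u="$user" '$1 == u { print $7 }' "$file"
}
```

For example, login_shell kristy should print /sbin/nologin. getent passwd kristy shows the same entry and also covers users coming from non-file sources such as LDAP.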

Day 2: Temporary User Setup with Expiry

Create a temporary user whose account is disabled after the given date (format YYYY-MM-DD), then set its password:

sudo useradd -e 2026-12-24 kristy

sudo passwd kristy
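useradd -e stores the expiry date in the eighth field of /etc/shadow as a number of days since 1970-01-01, and sudo chage -l kristy prints it as a readable date. The sketch below converts between the two forms; it assumes GNU date and works in UTC (the function names are illustrative):

```shell
#!/bin/bash
# Convert a YYYY-MM-DD date to days since the Unix epoch (the unit /etc/shadow uses).
date_to_epoch_days() {
  echo $(( $(date -u -d "$1" +%s) / 86400 ))
}

# Convert an epoch-day count (shadow field 8) back to YYYY-MM-DD.
epoch_days_to_date() {
  date -u -d "@$(( $1 * 86400 ))" +%F
}
```

With the date used above, date_to_epoch_days 2026-12-24 prints 20811, the value normally expected in kristy's shadow entry.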

Day 3: Secure Root SSH Access

Disable direct root login over SSH. Edit the SSH daemon configuration:

sudo nano /etc/ssh/sshd_config

Set the directive below, then restart sshd so the change takes effect. You have to do this on every single host!

PermitRootLogin no

sudo systemctl restart sshd
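Because the edit has to be repeated on every host, it helps to script it. A minimal sketch, assuming GNU sed: it rewrites any existing (possibly commented-out) PermitRootLogin line, or appends one if none exists. Per the sshd_config manual, sshd uses the first value it reads for a keyword, so on releases where sshd_config includes files from /etc/ssh/sshd_config.d/ near the top, check those files too.

```shell
#!/bin/bash
# Force "PermitRootLogin no" in an sshd_config-style file (GNU sed -i).
# Any existing PermitRootLogin line, commented or not, is replaced;
# otherwise the directive is appended at the end of the file.
disable_root_login() {
  local cfg="${1:-/etc/ssh/sshd_config}"
  if grep -qE '^[#[:space:]]*PermitRootLogin([[:space:]]|$)' "$cfg"; then
    sed -i -E 's/^[#[:space:]]*PermitRootLogin([[:space:]].*)?$/PermitRootLogin no/' "$cfg"
  else
    echo 'PermitRootLogin no' >> "$cfg"
  fi
}
```

Afterwards, sudo sshd -t checks the configuration syntax before sudo systemctl restart sshd applies it.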

Day 4: Script Execution Permissions

In a bid to automate backup processes, the xFusionCorp Industries sysadmin team has developed a new bash script named xfusioncorp.sh. While the script has been distributed to all necessary servers, it lacks executable permissions on App Server 1 within the Stratos Datacenter.

Your task is to grant executable permissions to the /tmp/xfusioncorp.sh script on App Server 1. Additionally, ensure that all users have the capability to execute it.

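Grant the permissions with mode 755 (rwxr-xr-x). Other users need read as well as execute permission, because the shell has to read a script in order to run it:

sudo chmod 755 /tmp/xfusioncorp.sh

Session on App Server 1 (the ls output shows the resulting permissions):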

thor@jumphost ~$ ssh tony@stapp01.stratos.xfusioncorp.com

The authenticity of host 'stapp01.stratos.xfusioncorp.com (172.17.0.4)' can't be established.

ED25519 key fingerprint is SHA256:8eDx2ZriNxW9+pNci7Zq6oECY1W13b28pRzv/AA3cxE.

[tony@stapp01 tmp]$ ls -la /tmp

total 36

drwxrwxrwt 1 root root 4096 Dec 12 08:44 .

drwxr-xr-x 1 root root 4096 Dec 12 08:45 ..

drwxrwxrwt 2 root root 4096 Dec 12 08:42 .ICE-unix

drwxrwxrwt 2 root root 4096 Dec 12 08:42 .X11-unix

drwxrwxrwt 2 root root 4096 Dec 12 08:42 .XIM-unix

drwxrwxrwt 2 root root 4096 Dec 12 08:42 .font-unix

drwx------ 3 root root 4096 Dec 12 08:42 systemd-private-1435520e8a0746a589dc0f038604b67c-dbus-broker.service-OQFfiu

drwx------ 3 root root 4096 Dec 12 08:42 systemd-private-1435520e8a0746a589dc0f038604b67c-systemd-logind.service-QuuNiN

-rwxr-xr-x 1 root root 40 Dec 12 08:42 xfusioncorp.sh

[tony@stapp01 tmp]$ ./xfusioncorp.sh

Welcome To KodeKloud
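To check the result without switching users, the last octal digit of the mode is what applies to other users, and a script needs both read (4) and execute (1) there. A small sketch using GNU stat (the function name is illustrative):

```shell
#!/bin/bash
# Succeed if "other" users have both read and execute permission on a file,
# which is what running a shell script as a non-owner requires.
others_can_run_script() {
  local mode other
  mode=$(stat -c '%a' "$1") || return 1
  other=$(( 8#$mode & 7 ))   # last octal digit = permissions for others
  (( (other & 5) == 5 ))     # 4 (read) + 1 (execute)
}
```

For example, others_can_run_script /tmp/xfusioncorp.sh && echo "all users can run it". A mode such as 711 fails this check even though everyone has the execute bit.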

Day 5: SELinux Installation and Configuration

Following a security audit, the xFusionCorp Industries security team has opted to enhance application and server security with SELinux. To initiate testing, the following requirements have been established for App server 2 in the Stratos Datacenter:

- Install the required SELinux packages.

- Permanently disable SELinux for the time being; it will be re-enabled after necessary configuration changes.

- No need to reboot the server, as a scheduled maintenance reboot is already planned for tonight.

- Disregard the current status of SELinux via the command line; the final status after the reboot should be disabled.

[root@stapp02 ~]# yum install -y policycoreutils policycoreutils-python-utils selinux-policy selinux-policy-targeted setroubleshoot-server

[root@stapp02 ~]# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

[root@stapp02 ~]# vi /etc/selinux/config

[root@stapp02 ~]# cat /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

# See also:

# https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux/9/html/using_selinux/changing-selinux-states-and-modes_using-selinux#changing-selinux-modes-at-boot-time_changing-selinux-states-and-modes

#

# NOTE: Up to RHEL 8 release included, SELINUX=disabled would also

# fully disable SELinux during boot. If you need a system with SELinux

# fully disabled instead of SELinux running with no policy loaded, you

# need to pass selinux=0 to the kernel command line. You can use grubby

# to persistently set the bootloader to boot with selinux=0:

#

# grubby --update-kernel ALL --args selinux=0

#

# To revert back to SELinux enabled:

#

# grubby --update-kernel ALL --remove-args selinux

#

SELINUX=disabled

# SELINUXTYPE= can take one of these three values:

# targeted - Targeted processes are protected,

# mls - Multi Level Security protection.

SELINUXTYPE=targeted
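Since the current runtime status is to be ignored and only the post-reboot state matters, the value to verify is the SELINUX= line in the config file (getenforce and sestatus report the running mode, which stays unchanged until the reboot). A minimal sketch that prints it (the function name is illustrative):

```shell
#!/bin/bash
# Print the SELinux mode configured for the next boot (the SELINUX=... line).
# ^SELINUX= does not match SELINUXTYPE= because '=' must follow directly.
selinux_config_mode() {
  awk -F= '/^SELINUX=/ { print $2 }' "${1:-/etc/selinux/config}"
}
```

Running selinux_config_mode on the server should print disabled after the sed command above.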

Day 6: Create a Cron Job

The Nautilus system admins team has prepared scripts to automate several day-to-day tasks. They want them to be deployed on all app servers in Stratos DC on a set schedule. Before that they need to test similar functionality with a sample cron job. Therefore, perform the steps below:

a. Install cronie package on all Nautilus app servers and start crond service.

b. Add a cron */5 * * * * echo hello > /tmp/cron_text for root user.

- Log in to each app server over SSH.

- Install the cronie package (CentOS):

sudo yum install cronie -y

Start crond service

sudo systemctl enable crond

sudo systemctl start crond

Create the cron schedule (this opens root's crontab in an editor; add the line below):

sudo crontab -e

*/5 * * * * echo hello > /tmp/cron_text

Verify crontab:

sudo crontab -l

Then wait up to 5 minutes and check that /tmp/cron_text contains hello.
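*/5 in the minute field fires at minutes 0, 5, 10, ..., 55 of every hour, at the start of the minute, so the first write to /tmp/cron_text happens within five minutes of adding the job. A small arithmetic sketch that computes the wait from the current minute and second (a hypothetical helper, not part of cron):

```shell
#!/bin/bash
# Seconds until the next run of a "*/5 * * * *" job, given the current
# minute and second. Every 5-minute mark divides the hour evenly, so the
# schedule is simply "every 300 seconds from the top of the hour".
seconds_until_next_run() {
  local minute="$1" second="$2" period=300
  local elapsed=$(( (10#$minute * 60 + 10#$second) % period ))  # 10# handles "08"
  if (( elapsed == 0 )); then
    echo 0
  else
    echo $(( period - elapsed ))
  fi
}
```

For example, seconds_until_next_run "$(date +%M)" "$(date +%S)" tells you how long to wait before checking the file.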

Automation Script

#!/bin/bash

# setup_cron_job.sh

# Script to setup cron job on CentOS for Nautilus app servers

set -e # Exit on any error

echo "=== Setting up Cron Job on CentOS ==="

# Step 1: Install cronie package

echo "Installing cronie package..."

if ! rpm -q cronie &>/dev/null; then

sudo yum install cronie -y

echo "✓ cronie package installed successfully"

else

echo "✓ cronie package already installed"

fi

# Step 2: Start and enable crond service

echo "Starting and enabling crond service..."

sudo systemctl start crond

sudo systemctl enable crond

# Verify service is running

if systemctl is-active --quiet crond; then

echo "✓ crond service is running"

else

echo "✗ Failed to start crond service"

exit 1

fi

# Step 3: Add cron job for root user

echo "Adding cron job for root user..."

# Define the cron job

CRON_JOB="*/5 * * * * echo hello > /tmp/cron_text"

# Check if cron job already exists

if sudo crontab -l 2>/dev/null | grep -q "echo hello > /tmp/cron_text"; then

echo "✓ Cron job already exists"

else

# Add the cron job

(sudo crontab -l 2>/dev/null || true; echo "$CRON_JOB") | sudo crontab -

echo "✓ Cron job added successfully"

fi

# Step 4: Verify the setup

echo "Verifying cron job setup..."

echo "Current cron jobs for root user:"

sudo crontab -l

echo ""

echo "=== Setup Complete ==="

echo "The cron job will run every 5 minutes and write 'hello' to /tmp/cron_text"

echo "To monitor: sudo tail -f /var/log/cron"

echo "To check output: cat /tmp/cron_text (after 5+ minutes)"

# Optional: Show service status

echo ""

echo "Crond service status:"

sudo systemctl status crond --no-pager -l

Day 7: Linux SSH Authentication

The system admins team of xFusionCorp Industries has set up some scripts on jump host that run at regular intervals and perform operations on all app servers in Stratos Datacenter. To make these scripts work properly we need to make sure the thor user on jump host has password-less SSH access to all app servers through their respective sudo users (i.e., tony for App Server 1). Based on the requirements, perform the following: Set up a password-less authentication from user thor on jump host to all app servers through their respective sudo users.

Login to jump host as thor

ssh thor@jump_host

Generate SSH key (press Enter for all prompts)

ssh-keygen -t rsa -b 2048

Copy key to respective sudo users on app servers

ssh-copy-id tony@stapp01

ssh-copy-id steve@stapp02

ssh-copy-id banner@stapp03

Verify password-less access

ssh tony@stapp01.stratos.xfusioncorp.com

ssh steve@stapp02.stratos.xfusioncorp.com

ssh banner@stapp03.stratos.xfusioncorp.com
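Each ssh command above opens an interactive session that you have to exit. A non-interactive variant is sketched below; it uses the host-to-user mapping from the steps above, and BatchMode=yes makes ssh fail instead of prompting, so a leftover password prompt shows up as an error. The function names are illustrative.

```shell
#!/bin/bash
# Sudo user for each Stratos app server (mapping taken from the steps above).
sudo_user_for() {
  case "$1" in
    stapp01) echo tony ;;
    stapp02) echo steve ;;
    stapp03) echo banner ;;
    *) return 1 ;;
  esac
}

# Create thor's key if missing (empty passphrase), copy it to every app server,
# then confirm key-based login. BatchMode=yes makes ssh fail instead of prompting.
setup_passwordless() {
  local host user
  [ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -b 2048 -N '' -f ~/.ssh/id_rsa
  for host in stapp01 stapp02 stapp03; do
    user=$(sudo_user_for "$host") || continue
    ssh-copy-id "$user@$host"
    if ssh -o BatchMode=yes "$user@$host" true; then
      echo "OK: $user@$host"
    else
      echo "FAILED: $user@$host" >&2
    fi
  done
}
```

Run setup_passwordless as thor on the jump host; ssh-copy-id asks for each sudo user's password once, and every later login should print OK without a prompt.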

Day 8: Install Ansible

During the weekly meeting, the Nautilus DevOps team discussed the automation and configuration management solutions that they want to implement. While considering several options, the team has decided to go with Ansible for now due to its simple setup and minimal prerequisites. The team wanted to start testing using Ansible, so they have decided to use jump host as an Ansible controller to test different kinds of tasks on the rest of the servers.

Install ansible version 4.7.0 on Jump host using pip3 only. Make sure Ansible binary is available globally on this system, i.e., all users on this system are able to run Ansible commands.

Check pip3 version

pip3 --version

Install pip3 (if not already installed)

sudo yum install -y python3-pip

Install Ansible 4.7.0 globally using pip3

sudo pip3 install ansible==4.7.0

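Verify Ansible version. The Ansible 4.7.0 community package installs ansible-core 2.11.x, so ansible --version reports that core version rather than 4.7.0; pip3 show ansible shows the package version.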

ansible --version

Check Ansible binary location

which ansible

Verify PATH includes Ansible binary directory

echo $PATH

Run Ansible (basic command check)

ansible
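To confirm that the pinned package is the one installed, read the Version line from pip3 show ansible. A minimal parser for that output (the function name is illustrative):

```shell
#!/bin/bash
# Read "pip3 show <pkg>" output on stdin and print the value of its Version: line.
pip_pkg_version() {
  awk -F': ' '$1 == "Version" { print $2; exit }'
}
```

For example, pip3 show ansible | pip_pkg_version should print 4.7.0 if the install above succeeded.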

Day 9: MariaDB Troubleshooting

There is a critical issue going on with the Nautilus application in Stratos DC. The production support team identified that the application is unable to connect to the database. After digging into the issue, the team found that mariadb service is down on the database server.

Look into the issue and fix the same.

Check OS Information (Verify Environment)

cat /etc/os-release

Check MariaDB Service Status

Confirms whether MariaDB is running, stopped, or failed.

sudo systemctl status mariadb

sudo systemctl status mariadb.service

Attempt to Start MariaDB

Initial attempt to bring the database service online.

sudo systemctl start mariadb

Check MariaDB Error Logs (Root Cause Analysis)

Use this when the service fails to start; here it showed InnoDB and permission errors.

sudo tail /var/log/mariadb/mariadb.log

Fix MariaDB Data Directory Ownership

MariaDB runs as mysql user and must own its data directory.

sudo chown -R mysql:mysql /var/lib/mysql

Fix Data Directory Permissions

Ensures MariaDB can read/write database files.

sudo chmod 755 /var/lib/mysql

Create MySQL Socket Directory

MariaDB needs this directory to create its socket file.

sudo mkdir -p /var/run/mysqld

Set Correct Ownership for Socket Directory

Allows MariaDB to bind to the socket.

sudo chown mysql:mysql /var/run/mysqld

Restart MariaDB After Fix

Applies permission changes and restarts the service.

sudo systemctl restart mariadb

Verify MariaDB Is Running

Final confirmation that the issue is resolved.

sudo systemctl status mariadb.service
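If the service still fails, two quick checks cover the root causes found above. A sketch with illustrative function names: the first filters the MariaDB log for error lines and permission failures (errno 13 is EACCES, "permission denied", on Linux), the second prints the owner of a path so you can confirm /var/lib/mysql reports mysql:mysql. sudo journalctl -u mariadb is another place to look.

```shell
#!/bin/bash
# Print log lines that usually explain a failed MariaDB start:
# [ERROR] entries and OS permission errors (errno/errcode 13 = EACCES).
mariadb_log_errors() {
  grep -Ei '\[ERROR\]|permission denied|errno: ?13|errcode: ?13' \
    "${1:-/var/log/mariadb/mariadb.log}"
}

# Print owner:group of a path; /var/lib/mysql should report mysql:mysql.
path_owner() {
  stat -c '%U:%G' "$1"
}
```

For example, sudo bash -c "$(declare -f mariadb_log_errors); mariadb_log_errors" runs the filter with the privileges needed to read the log, and path_owner /var/lib/mysql should print mysql:mysql after the chown.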

Day 10: Linux Bash Scripts

The production support team of xFusionCorp Industries is working on developing some bash scripts to automate different day to day tasks. One is to create a bash script for taking websites backup. They have a static website running on App Server 3 in Stratos Datacenter, and they need to create a bash script named news_backup.sh which should accomplish the following tasks. (Also remember to place the script under /scripts directory on App Server 3).

a. Create a zip archive named xfusioncorp_news.zip of /var/www/html/news directory.

b. Save the archive in /backup/ on App Server 3. This is temporary storage, as backups from this location will be cleaned on a weekly basis. Therefore, we also need to save this backup archive on Nautilus Backup Server.

c. Copy the created archive to Nautilus Backup Server in the /backup/ location.

d. Please make sure the script won’t ask for a password while copying the archive file. Additionally, the respective server user (for example, tony in case of App Server 1) must be able to run it.

e. Do not use sudo inside the script.

Note:

The zip package must be installed on given App Server before executing the script. This package is essential for creating the zip archive of the website files. Install it manually outside the script.

1. Basic Navigation & Checks

ls

whoami

cd ..

ls

cd scripts/

ls -la

2. Generate SSH Key (Passwordless SCP)

ssh-keygen -t rsa -b 2048

SSH keys are stored in:

cd /home/banner/.ssh/

ls

3. Copy SSH Key to Nautilus Backup Server

ssh-copy-id clint@stbkp01.stratos.xfusioncorp.com

Verify passwordless login:

ssh clint@stbkp01.stratos.xfusioncorp.com

4. Create Backup Script

Navigate to scripts directory:

cd /scripts/

ls

Create and edit script:

vi news_backup.sh
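The script body itself is not listed here. A minimal sketch of what it could contain, assuming the backup server login clint@stbkp01.stratos.xfusioncorp.com from step 3 and the passwordless key from step 2. The logic sits in a function (a hypothetical name) whose arguments default to the task's paths, so the file only needs a single call at the end:

```shell
#!/bin/bash
# Sketch of /scripts/news_backup.sh: no sudo inside, as required.
# Defaults come from the task; arguments exist only to make the function testable.
news_backup() {
  local src="${1:-/var/www/html/news}"
  local backup_dir="${2:-/backup}"
  local remote="${3:-clint@stbkp01.stratos.xfusioncorp.com:/backup/}"
  local archive="${backup_dir}/xfusioncorp_news.zip"

  # a + b: recreate the archive so stale files from an earlier run are not kept.
  rm -f "$archive"
  zip -rq "$archive" "$src" || { echo "zip failed" >&2; return 1; }

  # c + d: copy to the Nautilus Backup Server; key-based SSH means no password prompt.
  scp "$archive" "$remote" || { echo "scp failed" >&2; return 1; }

  echo "Created $archive and copied it to $remote"
}

# In the real script file, finish with a single line:  news_backup
```

Run it as the app server user (banner on App Server 3) with ./news_backup.sh; xfusioncorp_news.zip should then appear in /backup/ on both servers.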

Make script executable:

chmod +x news_backup.sh

5. Install Required Package (Outside Script)

sudo yum install zip

sudo is not used inside the script, only during setup.

Day 11: Install and Configure Tomcat Server

Install and Setup Tomcat Server

The Nautilus application development team recently finished the beta version of one of their Java-based applications, which they are planning to deploy on one of the app servers in Stratos DC. After an internal team meeting, they have decided to use the tomcat application server. Based on the requirements mentioned below complete the task:

- Install tomcat server on App Server 1.

- Configure it to run on port 3001.

- There is a ROOT.war file on Jump host at location /tmp. Deploy it on this tomcat server and make sure the webpage works directly on the base URL, i.e., curl http://stapp01:3001

1. Install Tomcat on App Server 1 (stapp01)

Login to App Server 1:

ssh tony@stapp01

Install Tomcat:

sudo yum install -y tomcat

2. Configure Tomcat to Run on Port 3001

Edit Tomcat server configuration:

sudo vi /etc/tomcat/server.xml

Find the Connector section (default port 8080):

<Connector port="8080" protocol="org.apache.coyote.http11.Http11NioProtocol"

connectionTimeout="20000"

redirectPort="8443" />

Change 8080 → 3001:

<Connector port="3001" protocol="org.apache.coyote.http11.Http11NioProtocol"

connectionTimeout="20000"

redirectPort="8443" />

Save and exit.
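The same edit can be made non-interactively. A sketch assuming GNU sed and that the HTTP connector's port attribute appears as port="8080" on the <Connector line, as in the snippet above (the function name is illustrative):

```shell
#!/bin/bash
# Change the HTTP Connector port in a Tomcat server.xml (GNU sed -i).
# Only <Connector ... port="8080" is rewritten; the <Server> shutdown port
# and redirectPort="8443" (capital P) are left alone.
set_tomcat_http_port() {
  local new_port="$1" file="${2:-/etc/tomcat/server.xml}"
  sed -i -E "s/(<Connector[^>]*port=\")8080\"/\1${new_port}\"/" "$file"
}
```

From a root shell, set_tomcat_http_port 3001 followed by grep 'Connector port' /etc/tomcat/server.xml shows the new value.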

3. Copy ROOT.war from Jump Host to App Server 1

Exit to Jump Host (if needed):

exit

Copy the WAR file:

scp /tmp/ROOT.war tony@stapp01:/tmp/

Login back to App Server 1:

ssh tony@stapp01

Move WAR file to Tomcat deployment directory:

sudo mv /tmp/ROOT.war /usr/share/tomcat/webapps/

Important:

Deploying as ROOT.war ensures the app runs on the base URL.

4. Start and Enable Tomcat

sudo systemctl start tomcat

sudo systemctl enable tomcat

Verify Tomcat is listening on port 3001:

sudo netstat -tulnp | grep 3001

5. Verify Application Deployment

Test from App Server 1:

curl http://localhost:3001

Or from Jump Host:

curl http://stapp01:3001

If the webpage content loads, the deployment is successful.

Day 12: Linux Network Services

Our monitoring tool has reported an issue in Stratos Datacenter. One of our app servers has an issue, as its Apache service is not reachable on port 3004 (which is the Apache port). The service itself could be down, the firewall could be at fault, or something else could be causing the issue.

Use tools like telnet, netstat, etc. to find and fix the issue. Also make sure Apache is reachable from the jump host without compromising any security settings.

Once fixed, you can test it by running curl http://stapp01:3004 from the jump host.

Note: Please do not try to alter the existing index.html code, as it will lead to task failure.

1. Verify issue from jump host

curl http://stapp01:3004

telnet stapp01 3004

Purpose:

Confirms whether the service is reachable externally

Identifies network vs service-level issues

Then log in to the app server:

ssh tony@stapp01

2. Check Apache service status

sudo systemctl status httpd

If stopped:

sudo systemctl start httpd

sudo systemctl enable httpd

3. Identify what is using port 3004

sudo netstat -tulnp

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 127.0.0.11:36025 0.0.0.0:* LISTEN -

tcp 0 0 127.0.0.1:3004 0.0.0.0:* LISTEN 430/sendmail: accep

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 298/sshd

tcp6 0 0 :::22 :::* LISTEN 298/sshd

udp 0 0 127.0.0.11:56145 0.0.0.0:* -

or

sudo ss -tulnp | grep 3004

Sendmail is listening on 127.0.0.1:3004, so Apache cannot bind to port 3004.

4. Resolve port conflict (sendmail case)

sudo systemctl stop sendmail

sudo systemctl disable sendmail

Verify port is free:

sudo netstat -tulnp | grep 3004

5. Start Apache after freeing the port

sudo systemctl start httpd

sudo systemctl enable httpd

Verify Apache is listening:

sudo netstat -tulnp | grep 3004

Expected:

0.0.0.0:3004 LISTEN httpd

6. Local validation on app server

curl http://localhost:3004

7. Check firewall service availability

sudo systemctl status firewalld

If firewalld is not installed, proceed to iptables checks.

8. Inspect iptables rules

sudo iptables -L -n

Key things to check:

INPUT chain policy

REJECT or DROP rules

Explicit allow rules for required ports

9. Allow Apache port via iptables (if needed)

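sudo iptables -I INPUT 4 -p tcp --dport 3004 -j ACCEPT

This inserts the ACCEPT rule at position 4, ahead of the final REJECT rule on this host. The right position depends on the existing rules, so list them first with sudo iptables -L INPUT -n --line-numbers.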
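Instead of hard-coding 4, the position can be read from the rule listing. A sketch that parses iptables -L INPUT -n --line-numbers output on stdin, where column 1 is the rule number and column 2 the target (the function name is illustrative):

```shell
#!/bin/bash
# Print the number of the first REJECT rule in
# "iptables -L INPUT -n --line-numbers" output read from stdin.
first_reject_line() {
  awk '$2 == "REJECT" { print $1; exit }'
}
```

Usage on the app server:

pos=$(sudo iptables -L INPUT -n --line-numbers | first_reject_line)

sudo iptables -I INPUT "${pos:-1}" -p tcp --dport 3004 -j ACCEPT

Inserting at the REJECT rule's own number pushes that rule down by one, so the new ACCEPT is evaluated first.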

10. Final external test (pass condition)

From jump host:

curl http://stapp01:3004

Day 13: IPtables Installation And Configuration

We have one of our websites up and running on our Nautilus infrastructure in Stratos DC. Our security team has raised a concern that right now Apache’s port i.e 5000 is open for all since there is no firewall installed on these hosts. So we have decided to add some security layer for these hosts and after discussions and recommendations we have come up with the following requirements:

- Install iptables and all its dependencies on each app host.

- Block incoming port 5000 on all apps for everyone except for the LBR host.

- Make sure the rules remain, even after system reboot.

Log in to every app server and run the following Bash script there.

Step 1:

vi configure_firewall.sh

Bash Script

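#!/bin/bash

set -e

LBR_IP="172.16.238.14"

APP_PORT="5000"

# Install iptables and the service that restores saved rules at boot

sudo yum install -y iptables iptables-services

sudo systemctl enable --now iptables

# Start from an empty rule set: allow the LBR host, reject everyone else on the app port

sudo iptables -F

sudo iptables -A INPUT -p tcp --dport "${APP_PORT}" -s "${LBR_IP}" -j ACCEPT

sudo iptables -A INPUT -p tcp --dport "${APP_PORT}" -j REJECT

# Write the rules to /etc/sysconfig/iptables so they survive a reboot

sudo service iptables save

sudo iptables -L -n --line-numbers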

Step 2:

chmod +x configure_firewall.sh

Step 3:

sudo ./configure_firewall.sh
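iptables evaluates rules top to bottom and the first matching ACCEPT or REJECT wins, so the LBR exception must sit above the REJECT. A sketch that checks this from iptables -S INPUT output on stdin (the function name is illustrative; it relies on iptables -S printing host addresses with a /32 suffix):

```shell
#!/bin/bash
# Read "iptables -S INPUT" output on stdin and succeed only if an ACCEPT rule
# for the given source IP and port appears before a REJECT rule for that port.
lbr_rule_before_reject() {
  local ip="$1" port="$2"
  awk -v ip="$ip" -v port="$port" '
    index($0, "--dport " port " ") && / -j REJECT/ && !rej { rej = NR }
    index($0, "--dport " port " ") && index($0, "-s " ip "/32 ") && / -j ACCEPT/ && !acc { acc = NR }
    END { exit !(acc && rej && acc < rej) }'
}
```

On each app server, sudo iptables -S INPUT | lbr_rule_before_reject 172.16.238.14 5000 && echo OK confirms the order; after a reboot the same check shows whether the saved rules were restored.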

Day 14: Linux Process Troubleshooting

The production support team of xFusionCorp Industries has deployed some of the latest monitoring tools to keep an eye on every service, application, etc. running on the systems. One of the monitoring systems reported Apache service unavailability on one of the app servers in Stratos DC.

Identify the faulty app host and fix the issue. Make sure Apache service is up and running on all app hosts. They might not have hosted any code yet on these servers, so you don’t need to worry if Apache isn’t serving any pages. Just make sure the service is up and running. Also, make sure Apache is running on port 6100 on all app servers.

Check Apache service status

sudo systemctl status httpd

If stopped:

sudo systemctl start httpd

sudo systemctl enable httpd

Identify what is using port 6100

sudo netstat -tulnp | grep 6100

Example output:

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 127.0.0.1:6100 0.0.0.0:* LISTEN 430/sendmail: accep

or

sudo ss -tulnp | grep 6100

Sendmail is running on port 6100.
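To repeat this check quickly on each of the app servers, the listening process can be pulled out of ss output. A sketch that reads sudo ss -tlnp output on stdin, assuming the users:(("name",pid=...,fd=...)) format that ss prints with -p (the function name is illustrative):

```shell
#!/bin/bash
# Read "ss -tlnp" output on stdin and print the name of the process
# listening on the given port (empty if nothing listens there).
port_owner() {
  local port="$1"
  grep -E ":${port}[[:space:]]" | sed -n 's/.*users:(("\([^"]*\)".*/\1/p' | head -n 1
}
```

For example, sudo ss -tlnp | port_owner 6100 prints sendmail on the faulty host and httpd once Apache owns the port.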

Resolve port conflict (sendmail case)

sudo systemctl stop sendmail

sudo systemctl disable sendmail

Verify port is free:

sudo netstat -tulnp | grep 6100

(No output means the port is free.)

Start Apache after freeing the port

sudo systemctl start httpd

sudo systemctl enable httpd

Verify Apache is listening:

sudo netstat -tulnp | grep 6100

Expected:

0.0.0.0:6100 LISTEN httpd

Local validation on app server

curl http://localhost:6100

Day 15: Setup SSL for Nginx

The system admins team of xFusionCorp Industries needs to deploy a new application on App Server 2 in Stratos Datacenter. They have some prerequisites to get that server ready for application deployment. Prepare the server as per requirements shared below:

- Install and configure nginx on App Server 2.

- On App Server 2 there is a self signed SSL certificate and key present at location /tmp/nautilus.crt and /tmp/nautilus.key. Move them to some appropriate location and deploy the same in Nginx.

- Create an index.html file with content Welcome! under Nginx document root.

- For final testing try to access the App Server 2 link (either hostname or IP) from jump host using curl command. For example curl -Ik https://<app-server-ip>/.

Install & Enable Nginx

sudo yum install -y nginx

sudo systemctl start nginx

sudo systemctl enable nginx

systemctl status nginx

Prepare SSL Directory

sudo mkdir -p /etc/nginx/ssl

Move SSL Certificate & Key

sudo mv /tmp/nautilus.crt /etc/nginx/ssl/

sudo mv /tmp/nautilus.key /etc/nginx/ssl/

Set Secure Permissions

sudo chmod 600 /etc/nginx/ssl/nautilus.key

sudo chmod 644 /etc/nginx/ssl/nautilus.crt

Configure Nginx for HTTPS

sudo vi /etc/nginx/nginx.conf

Inside the server block that listens on port 443 (listen 443 ssl;), set the certificate and key paths:

ssl_certificate /etc/nginx/ssl/nautilus.crt;

ssl_certificate_key /etc/nginx/ssl/nautilus.key;
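The two directives only take effect inside a server block that listens for TLS. A sketch that writes such a block to a separate file, assuming the default nginx.conf includes /etc/nginx/conf.d/*.conf inside http {} and that the document root is /usr/share/nginx/html (the path used for index.html below). Putting the same block inside http {} in nginx.conf works equally well. The function name is illustrative and the output path is an argument so it can be tried on a scratch file:

```shell
#!/bin/bash
# Write a minimal HTTPS server block for Nginx to the given file.
write_ssl_server_block() {
  local out="${1:-/etc/nginx/conf.d/ssl.conf}"
  cat > "$out" <<'EOF'
server {
    listen       443 ssl;
    listen       [::]:443 ssl;
    server_name  _;
    root         /usr/share/nginx/html;

    ssl_certificate     /etc/nginx/ssl/nautilus.crt;
    ssl_certificate_key /etc/nginx/ssl/nautilus.key;

    location / {
        index index.html;
    }
}
EOF
}
```

Run it from a root shell (the redirection needs write access to /etc/nginx/conf.d), then validate with sudo nginx -t before reloading.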

Create Application Page

sudo vi /usr/share/nginx/html/index.html

Content:

Welcome!

(If needed, remove and recreate)

sudo rm /usr/share/nginx/html/index.html

sudo vi /usr/share/nginx/html/index.html

Validate & Reload Nginx

sudo nginx -t

sudo systemctl reload nginx

Test HTTPS from Jump Host

Check response headers (-k accepts the self-signed certificate; an HTTP/2 status line appears only if HTTP/2 is enabled in Nginx)

curl -Ik https://<app-server-ip>/

Check page content

curl -k https://<app-server-ip>/

Expected Output:

Welcome!

Day 16: Install and Configure Nginx as an LBR

Day by day traffic is increasing on one of the websites managed by the Nautilus production support team. Therefore, the team has observed a degradation in website performance. Following discussions about this issue, the team has decided to deploy this application on a high availability stack i.e on Nautilus infra in Stratos DC. They started the migration last month and it is almost done, as only the LBR server configuration is pending. Configure LBR server as per the information given below:

- Install nginx on LBR server.

- Configure load-balancing within the http context, making use of all App Servers. Ensure that you update only the main Nginx configuration file located at /etc/nginx/nginx.conf.

- Make sure you do not update the apache port that is already defined in the apache configuration on all app servers; also make sure apache server is up and running on all app servers.

- Once done, you can access the website using StaticApp button on the top bar.

1. Verify Apache (httpd) Service on App Servers

Login to each app server and ensure the Apache service is running and listening on the correct port.

sudo ss -tlnup

Sample Output

Netid State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

tcp LISTEN 0 511 0.0.0.0:3000 0.0.0.0:* users:(("httpd",pid=1690,fd=3))

tcp LISTEN 0 128 0.0.0.0:22 0.0.0.0:* users:(("sshd",pid=1102,fd=3))

Apache is running on port: 3000

2. Install and Start NGINX on Load Balancer Server

Login to the LBR server and install NGINX.

sudo yum install nginx -y

sudo systemctl enable nginx

sudo systemctl start nginx

3. Configure NGINX Load Balancer

Edit the NGINX configuration file:

sudo vi /etc/nginx/nginx.conf

3.1 Add Upstream Backend Servers

Inside the http block (before the server block), add:

upstream stapp {

server stapp01:3000;

server stapp02:3000;

server stapp03:3000;

}
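The Apache port is lab-specific, so if step 1 reports something other than 3000, the upstream block can be generated from the observed port rather than edited by hand. A small sketch (the function name is illustrative; the upstream name and host list are arguments):

```shell
#!/bin/bash
# Print an Nginx upstream block: gen_upstream NAME PORT HOST...
gen_upstream() {
  local name="$1" port="$2" host
  shift 2
  printf 'upstream %s {\n' "$name"
  for host in "$@"; do
    printf '    server %s:%s;\n' "$host" "$port"
  done
  printf '}\n'
}
```

gen_upstream stapp 3000 stapp01 stapp02 stapp03 prints the block shown above, ready to paste inside http {} in /etc/nginx/nginx.conf.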

3.2 Configure Proxy Pass

Inside the server { listen 80; } block:

location / {

proxy_pass http://stapp;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_connect_timeout 5s;

proxy_read_timeout 60s;

}

3.3 Validate and Restart NGINX

sudo nginx -t

sudo systemctl restart nginx

4. Full NGINX Load Balancer Configuration

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 4096;

include /etc/nginx/mime.types;

default_type application/octet-stream;

include /etc/nginx/conf.d/*.conf;

upstream stapp {

server stapp01:3000;

server stapp02:3000;

server stapp03:3000;

}

server {

listen 80;

listen [::]:80;

server_name _;

include /etc/nginx/default.d/*.conf;

error_page 404 /404.html;

location = /404.html {}

error_page 500 502 503 504 /50x.html;

location = /50x.html {}

location / {

proxy_pass http://stapp;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_connect_timeout 5s;

proxy_read_timeout 60s;

}

}

}

Day 17: Install and Configure PostgreSQL

The Nautilus application development team has shared that they are planning to deploy one newly developed application on Nautilus infra in Stratos DC. The application uses PostgreSQL database, so as a pre-requisite we need to set up PostgreSQL database server as per requirements shared below:

PostgreSQL database server is already installed on the Nautilus database server.

- Create a database user kodekloud_tim and set its password to LQfKeWWxWD.

- Create a database kodekloud_db2 and grant full permissions to user kodekloud_tim on this database.

Please do not try to restart PostgreSQL server service.

1. Verify psql Binary Location

which psql

Output:

/usr/bin/psql

2. Switch to PostgreSQL Superuser

Login as the postgres user using sudo:

sudo -u postgres psql

Note: the warning below is harmless. It appears because the current directory (peter's home) is not accessible to the postgres user:

could not change directory to "/home/peter": Permission denied

3. Create a New PostgreSQL User

CREATE USER kodekloud_tim

WITH ENCRYPTED PASSWORD 'LQfKeWWxWD';

User created successfully.

4. Create a New Database

CREATE DATABASE kodekloud_db2;

Database created.

5. Grant Privileges on Database to User

GRANT ALL PRIVILEGES

ON DATABASE kodekloud_db2

TO kodekloud_tim;

Permissions granted.

6. Verify Users and Databases (Optional)

List roles:

\du

List databases:

\l
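The same three statements can be run without an interactive session. A sketch that prints the SQL for a given user, password and database (the function name is illustrative); single quotes in the password are doubled, as SQL string literals require, and the unquoted identifiers assume lowercase names like the ones in this task:

```shell
#!/bin/bash
# Print the SQL for: create a role with a password, create a database,
# and grant all database-level privileges on it to the role.
pg_setup_sql() {
  local user="$1" pass="$2" db="$3" qpass
  qpass=$(printf '%s' "$pass" | sed "s/'/''/g")
  printf "CREATE USER %s WITH ENCRYPTED PASSWORD '%s';\n" "$user" "$qpass"
  printf 'CREATE DATABASE %s;\n' "$db"
  printf 'GRANT ALL PRIVILEGES ON DATABASE %s TO %s;\n' "$db" "$user"
}
```

Usage: pg_setup_sql kodekloud_tim LQfKeWWxWD kodekloud_db2 | sudo -u postgres psql -v ON_ERROR_STOP=1. psql runs each statement from stdin separately, which CREATE DATABASE needs, and ON_ERROR_STOP stops at the first failure. No service restart is involved.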

Day 18: Configure LAMP server

xFusionCorp Industries is planning to host a WordPress website on their infra in Stratos Datacenter. They have already done infrastructure configuration; for example, on the storage server they already have a shared directory /vaw/www/html that is mounted on each app host under /var/www/html directory. Please perform the following steps to accomplish the task:

a. Install httpd, php and its dependencies on all app hosts.

b. Apache should serve on port 5004 within the apps.

c. Install/Configure MariaDB server on DB Server.

d. Create a database named kodekloud_db10 and create a database user named kodekloud_roy identified by password B4zNgHA7Ya. Further make sure this newly created user is able to perform all operations on the database you created.

e. Finally you should be able to access the website on LBR link, by clicking on the App button on the top bar. You should see a message like App is able to connect to the database using user kodekloud_roy

Install Apache & PHP

sudo yum install -y httpd php php-mysqli

Start & Enable Apache

sudo systemctl start httpd

sudo systemctl enable httpd

Verify PHP Installation

php -v

Change Apache Port to 5004

sudo sed -i 's/^Listen .*/Listen 5004/' /etc/httpd/conf/httpd.conf

Restart Apache

sudo systemctl restart httpd

Verify Apache is Listening on 5004

sudo ss -tulnp | grep httpd
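ss shows what httpd is bound to right now; to check what the configuration will use after the next restart, the Listen directives can be read from httpd.conf. A sketch that prints the port of each Listen line, handling both the bare-port and address:port forms (the function name is illustrative):

```shell
#!/bin/bash
# Print the port from every "Listen" directive in an Apache config file.
# Handles "Listen 5004", "Listen 0.0.0.0:5004" and "Listen [::]:5004".
apache_listen_ports() {
  awk '$1 == "Listen" { n = split($2, parts, ":"); print parts[n] }' \
    "${1:-/etc/httpd/conf/httpd.conf}"
}
```

After the sed edit above, apache_listen_ports should print 5004 on every app host.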

Optional: Bash Script (App Server Automation)

#!/bin/bash

set -e

sudo yum install -y httpd php php-mysqli

sudo systemctl start httpd

sudo systemctl enable httpd

sudo sed -i 's/^Listen .*/Listen 5004/' /etc/httpd/conf/httpd.conf

sudo systemctl restart httpd

php -v

sudo ss -tulnp | grep httpd

Usage:

vi setup_apache_5004.sh

chmod +x setup_apache_5004.sh

./setup_apache_5004.sh

DATABASE SERVER SETUP

Host: stdb01.stratos.xfusioncorp.com

SSH to DB Server

ssh peter@stdb01.stratos.xfusioncorp.com

Install MariaDB Server

sudo yum install -y mariadb-server

Start & Enable MariaDB

sudo systemctl start mariadb

sudo systemctl enable mariadb

Secure MariaDB

sudo mysql_secure_installation

Set root password, remove anonymous users, disallow remote root login, remove test DB.

Login to MySQL

mysql -u root -p

Create Database

CREATE DATABASE kodekloud_db10;

Create Database User

CREATE USER 'kodekloud_roy'@'%' IDENTIFIED BY 'B4zNgHA7Ya';

Grant Privileges

GRANT ALL PRIVILEGES ON kodekloud_db10.* TO 'kodekloud_roy'@'%';

FLUSH PRIVILEGES;

Verify Grants

SHOW GRANTS FOR 'kodekloud_roy'@'%';

Day 19: Install and Configure Web Application

xFusionCorp Industries is planning to host two static websites on their infra in Stratos Datacenter. The development of these websites is still in-progress, but we want to get the servers ready. Please perform the following steps to accomplish the task:

a. Install httpd package and dependencies on app server 1.

b. Apache should serve on port 5000.

c. There are two website backups, /home/thor/news and /home/thor/demo, on jump_host. Set them up on Apache in a way that news should work on the link http://localhost:5000/news/ and demo should work on link http://localhost:5000/demo/ on the mentioned app server.

d. Once configured you should be able to access the website using curl command on the respective app server, i.e curl http://localhost:5000/news/ and curl http://localhost:5000/demo/

Install Apache (httpd)

sudo yum install -y httpd

sudo systemctl enable httpd

sudo systemctl start httpd

Configure Apache to Listen on Port 5000

Edit Apache configuration:

sudo vi /etc/httpd/conf/httpd.conf

Update the listening port:

Listen 5000

Restart Apache:

sudo systemctl restart httpd

Copy Website Data from Jump Host (thor)

The tony user cannot write to /var/www/html directly, so the data is first copied to /tmp.

scp -r /home/thor/news tony@stapp01.stratos.xfusioncorp.com:/tmp/

scp -r /home/thor/demo tony@stapp01.stratos.xfusioncorp.com:/tmp/

Move Website Data to Apache Document Root

Login to the app server and move the files using sudo:

ssh tony@stapp01.stratos.xfusioncorp.com

sudo mv /tmp/news /var/www/html/

sudo mv /tmp/demo /var/www/html/

Set Correct Ownership and Permissions

sudo chown -R apache:apache /var/www/html/news /var/www/html/demo

sudo chmod -R 755 /var/www/html/news /var/www/html/demo

Verify:

ls -ld /var/www/html/news /var/www/html/demo

Verify Website Access Using curl

curl http://localhost:5000/news/

curl http://localhost:5000/demo/

Day 20: Configure Nginx + PHP-FPM Using Unix Socket

The Nautilus application development team is planning to launch a new PHP-based application, which they want to deploy on Nautilus infra in Stratos DC. The development team had a meeting with the production support team and they have shared some requirements regarding the infrastructure. Below are the requirements they shared:

a. Install nginx on app server 1, configure it to use port 8097, and set its document root to /var/www/html.

b. Install php-fpm version 8.3 on app server 1; it must use the unix socket /var/run/php-fpm/default.sock (create the parent directories if they don't exist).

c. Configure php-fpm and nginx to work together.

d. Once configured correctly, you can test the website using the curl http://stapp01:8097/index.php command from the jump host.

NOTE: We have copied two files, index.php and info.php, under /var/www/html as part of the PHP-based application setup. Please do not modify these files.

Install NGINX

sudo yum install -y nginx

sudo systemctl enable nginx

sudo systemctl start nginx

Configure NGINX to Listen on Port 8097

Edit nginx configuration:

sudo vi /etc/nginx/nginx.conf

Add or update the server block inside http {}:

server {

listen 8097;

listen [::]:8097;

server_name _;

root /var/www/html;

# Load configuration files for the default server block.

# include /etc/nginx/default.d/*.conf;

location ~ \.php$ {

try_files $uri =404;

fastcgi_pass unix:/var/run/php-fpm/default.sock;

fastcgi_index index.php;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

include fastcgi_params;

}

error_page 404 /404.html;

location = /404.html {

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

}

}

Restart nginx:

sudo systemctl restart nginx

Install PHP-FPM 8.3

Install PHP and PHP-FPM:

sudo dnf module install php:8.3 -y

Configure PHP-FPM Socket

Create required directory:

sudo mkdir -p /var/run/php-fpm

Edit PHP-FPM pool configuration:

sudo vi /etc/php-fpm.d/www.conf

Set the listen directive to the required socket path:

listen = /var/run/php-fpm/default.sock
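The same edit can be made non-interactively with sed. This is a sketch under assumptions: it works on a throwaway file whose sample lines are only modeled on a typical RHEL-family www.conf (your file will contain more), and it uses GNU sed's -i flag.

```bash
#!/usr/bin/env bash
set -eu

# Hypothetical stand-in for /etc/php-fpm.d/www.conf (sample lines, not the full file)
conf="$(mktemp)"
printf '%s\n' '[www]' 'user = apache' 'listen = /run/php-fpm/www.sock' 'listen.acl_users = apache,nginx' > "$conf"

# "|" as the sed delimiter avoids escaping the slashes in the socket path.
# The pattern requires "listen =", so listen.acl_users / listen.owner lines are untouched.
sed -i 's|^listen = .*|listen = /var/run/php-fpm/default.sock|' "$conf"

grep -n '^listen' "$conf"
```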

Set Correct Ownership and Permissions

sudo chown -R nginx:nginx /var/www/html

Start and Enable Services

sudo systemctl start php-fpm

sudo systemctl enable php-fpm

sudo systemctl start nginx

sudo systemctl enable nginx

Verify service status:

systemctl status nginx

systemctl status php-fpm

Verify Application Using curl (from Jump Host)

curl http://stapp01:8097/index.php

Optionally, test info.php as well:

curl http://stapp01:8097/info.php

For more background, see the article "How to Configure PHP-FPM with NGINX for Secure PHP Processing".

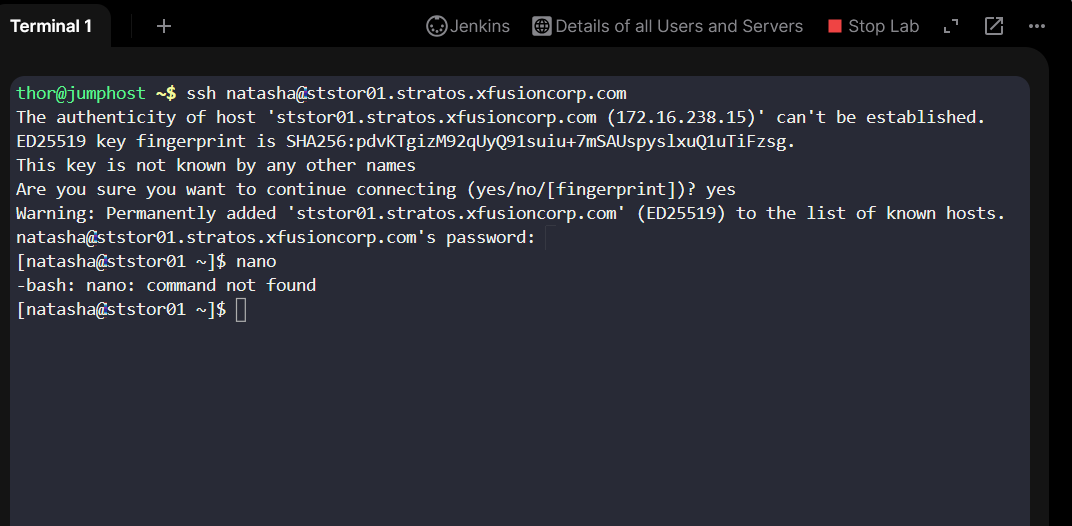

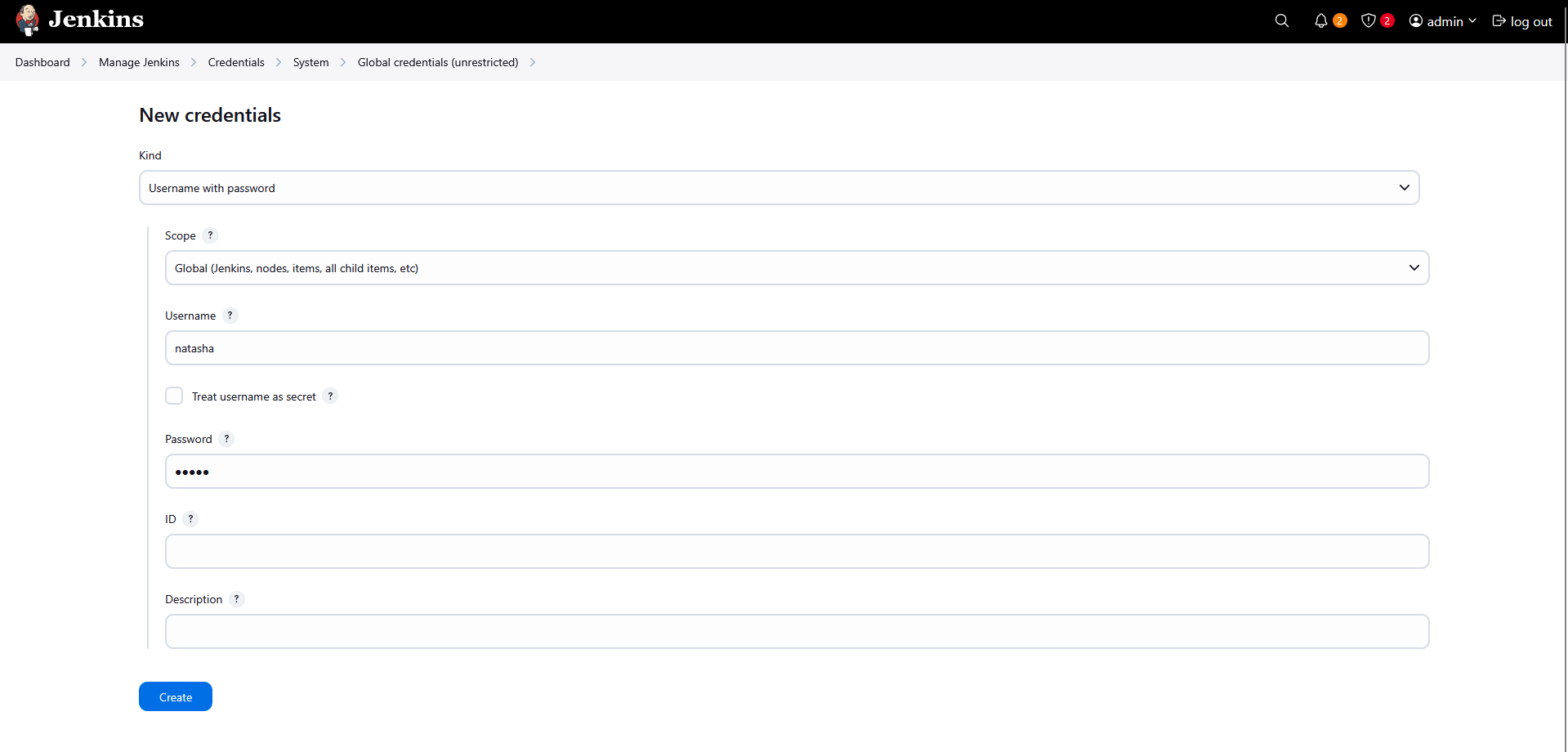

Day 21: Set Up Git Repository on Storage Server

The Nautilus development team has provided requirements to the DevOps team for a new application development project, specifically requesting the establishment of a Git repository. Follow the instructions below to create the Git repository on the Storage server in the Stratos DC:

Utilize yum to install the git package on the Storage Server.

Create a bare repository named /opt/media.git (ensure exact name usage).

[natasha@ststor01 ~]$ sudo yum install git

[natasha@ststor01 ~]$ git -h | grep bare

[--no-optional-locks] [--no-advice] [--bare] [--git-dir=<path>]

[natasha@ststor01 ~]$ git init --bare /opt/media.git

fatal: cannot mkdir /opt/media.git: Permission denied

[natasha@ststor01 ~]$ sudo git init --bare /opt/media.git

hint: Using 'master' as the name for the initial branch. This default branch name

hint: is subject to change. To configure the initial branch name to use in all

hint: of your new repositories, which will suppress this warning, call:

hint:

hint: git config --global init.defaultBranch <name>

hint:

hint: Names commonly chosen instead of 'master' are 'main', 'trunk' and

hint: 'development'. The just-created branch can be renamed via this command:

hint:

hint: git branch -m <name>

Initialized empty Git repository in /opt/media.git/
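To double-check that the result really is a bare repository (no working tree), git rev-parse can report it. This sketch uses a temporary directory as a hypothetical stand-in for /opt/media.git so it can run without sudo.

```bash
#!/usr/bin/env bash
set -eu

# Hypothetical stand-in for /opt/media.git
repo="$(mktemp -d)/media.git"
git init -q --bare "$repo"

# Prints "true" for a bare repository
git -C "$repo" rev-parse --is-bare-repository
```

On the storage server the check would be git -C /opt/media.git rev-parse --is-bare-repository.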

Day 22: Clone Git Repository on Storage Server

The DevOps team established a new Git repository last week, which remains unused at present. However, the Nautilus application development team now requires a copy of this repository on the Storage Server in the Stratos DC. Follow the provided details to clone the repository:

The repository to be cloned is located at /opt/cluster.git.

Clone this Git repository to the /usr/src/kodekloudrepos directory. Perform this task using the natasha user, and ensure that no modifications are made to the repository or existing directories, such as changing permissions or making unauthorized alterations.

thor@jumphost /opt$ ssh natasha@ststor01.stratos.xfusioncorp.com

[natasha@ststor01 ~]$ ls

[natasha@ststor01 ~]$ ls -ld /usr/src/kodekloudrepos

drwxr-xr-x 2 natasha natasha 4096 Dec 29 15:55 /usr/src/kodekloudrepos

[natasha@ststor01 ~]$ git clone /opt/cluster.git/ /usr/src/kodekloudrepos/cluster

Cloning into '/usr/src/kodekloudrepos/cluster'...

warning: You appear to have cloned an empty repository.

done.

[natasha@ststor01 ~]$ cd /usr/src/kodekloudrepos/cluster

[natasha@ststor01 cluster]$ git status

On branch master

No commits yet

nothing to commit (create/copy files and use "git add" to track)

[natasha@ststor01 cluster]$
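The same clone can be rehearsed without touching /opt or /usr/src. In this sketch the temporary paths are hypothetical stand-ins for /opt/cluster.git and /usr/src/kodekloudrepos; it also shows that running git clone from inside the target directory, without a destination argument, produces the same cluster directory because git derives the name from cluster.git.

```bash
#!/usr/bin/env bash
set -eu

base="$(mktemp -d)"
git init -q --bare "$base/cluster.git"   # hypothetical stand-in for /opt/cluster.git
mkdir "$base/kodekloudrepos"             # hypothetical stand-in for /usr/src/kodekloudrepos

cd "$base/kodekloudrepos"
# No destination given: git strips ".git" and clones into ./cluster
# (stderr hidden only to silence the "cloned an empty repository" warning)
git clone -q "$base/cluster.git" 2>/dev/null

git -C cluster status --short --branch
```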

Day 23: Fork a Git Repository

There is a Git server utilized by the Nautilus project teams. Recently, a new developer named Jon joined the team and needs to begin working on a project. To begin, he must fork an existing Git repository. Follow the steps below:

- Click on the Gitea UI button located on the top bar to access the Gitea page.

- Log in to the Gitea server using username jon and password Jon_pass123.

- Once logged in, locate the Git repository named sarah/story-blog and fork it under the jon user.

Note: For tasks requiring web UI changes, screenshots are necessary for review purposes. Additionally, consider utilizing screen recording software such as loom.com to record and share your task completion process.

Open the sarah/story-blog repository in Gitea, click Fork, make sure jon is selected as the owner, and create the fork.

Day 24: Git Create Branches

Nautilus developers are actively working on one of the project repositories, /usr/src/kodekloudrepos/beta. Recently, they decided to implement some new features in the application, and they want to maintain those new changes in a separate branch. Below are the requirements that have been shared with the DevOps team:

On the Storage server in Stratos DC, create a new branch xfusioncorp_beta from the master branch in the /usr/src/kodekloudrepos/beta git repo.

Please do not try to make any changes in the code.

cd /usr/src/kodekloudrepos/beta/

ls

git branch

sudo su

git branch

git switch master

git branch xfusioncorp_beta

git branch
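Since the task forbids code changes, the branch can also be created straight from master without switching to it, which leaves the working tree untouched. The sketch below rehearses this in a throwaway repository; the repo and its single empty commit are made up for illustration, and on the real server you would run the branch command with whatever privileges the repository requires (the steps above switch to root).

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commit works without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo="$(mktemp -d)"
git -C "$repo" init -q
git -C "$repo" symbolic-ref HEAD refs/heads/master
git -C "$repo" commit -q --allow-empty -m "initial commit"

# Create xfusioncorp_beta from master without checking it out
git -C "$repo" branch xfusioncorp_beta master
git -C "$repo" branch --list
```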

Day 25: Git Merge Branches

The Nautilus application development team has been working on a project repository /opt/cluster.git. This repo is cloned at /usr/src/kodekloudrepos on storage server in Stratos DC. They recently shared the following requirements with DevOps team:

Create a new branch nautilus in /usr/src/kodekloudrepos/cluster repo from master and copy the /tmp/index.html file (present on storage server itself) into the repo. Further, add/commit this file in the new branch and merge back that branch into master branch. Finally, push the changes to the origin for both of the branches.

Check Existing Branches

git branch

Only the master branch exists, and it is currently checked out.

Create a New Branch

git branch nautilus

A new branch named nautilus is created from master.

Switch to the New Branch

git checkout nautilus

You are now working on the nautilus branch.

Copy Required File into Repository

cp /tmp/index.html .

The file is copied into the repository root.

Verify Files in Repository

ls

index.html is now present in the repo.

Stage Changes

git add .

All changes (including index.html) are staged.

Commit Changes in Nautilus Branch

git commit -m "adding file"

The file is successfully committed to the nautilus branch.

Switch Back to Master Branch

git checkout master

You are now back on master.

Merge Nautilus Branch into Master

git merge nautilus

The nautilus branch changes are merged into master using a fast-forward merge.

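Push Both Branches to Origin

git push origin master nautilus

The task requires both branches on origin. A plain git push only updates the current branch under Git's default push.default=simple setting, so both branches are named explicitly.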
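The whole Day 25 flow can be rehearsed end to end against a local bare repository. In this sketch the temporary paths are hypothetical stand-ins for /opt/cluster.git, the clone under /usr/src/kodekloudrepos, and /tmp/index.html, and both branches are pushed by name at the end.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

base="$(mktemp -d)"
# Hypothetical stand-in for /opt/cluster.git, with master as its default branch
git init -q --bare "$base/cluster.git"
git --git-dir="$base/cluster.git" symbolic-ref HEAD refs/heads/master
git clone -q "$base/cluster.git" "$base/cluster" 2>/dev/null
cd "$base/cluster"
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "initial commit"
git push -q origin master

# Hypothetical stand-in for /tmp/index.html
echo '<h1>hello</h1>' > "$base/index.html"

git checkout -q -b nautilus          # create and switch in one step
cp "$base/index.html" .
git add index.html
git commit -q -m "adding file"
git checkout -q master
git merge -q nautilus                # fast-forward: master has no new commits
git push -q origin master nautilus   # push both branches by name
git ls-remote --heads origin
```

git ls-remote should then list refs/heads/master and refs/heads/nautilus pointing at the same commit, since the merge was a fast-forward.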

Day 26: Git Manage Remotes

The xFusionCorp development team added updates to the project that is maintained under the /opt/official.git repo and cloned under /usr/src/kodekloudrepos/official. Recently some changes were made on the Git server that is hosted on the Storage server in Stratos DC. The DevOps team added some new Git remotes, so we need to update the remotes of the /usr/src/kodekloudrepos/official repository as per the details below:

a. In the /usr/src/kodekloudrepos/official repo, add a new remote dev_official and point it to the /opt/xfusioncorp_official.git repository.

b. There is a file /tmp/index.html on the same server; copy this file to the repo and add/commit it to the master branch.

c. Finally, push the master branch to this new remote.

Check Existing Git Remotes

git remote -v

Add New Remote Repository

git remote add dev_official /opt/xfusioncorp_official.git

Verify Remote Was Added

git remote -v

Copy File Into Repository

cp /tmp/index.html .

Stage Changes

git add .

Commit Changes to Master Branch

git commit -m "adding file"

Check Current Branch

git branch

Push Master Branch to New Remote

git push dev_official master

Quick Tip

- Always explicitly specify the branch when pushing to a new remote:

git push <remote-name> <branch-name>
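A local rehearsal of adding a second remote and pushing master to it. The temporary bare repository and working repository are hypothetical stand-ins for /opt/xfusioncorp_official.git and the existing clone (the real clone already has commits and an origin remote), and the index.html content is made up.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commit works without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

base="$(mktemp -d)"
# Hypothetical stand-ins: the new bare remote and a working repository
git init -q --bare "$base/xfusioncorp_official.git"
git init -q "$base/official"
cd "$base/official"
git symbolic-ref HEAD refs/heads/master

# Copy/add/commit step (made-up file standing in for /tmp/index.html)
echo '<h1>hello</h1>' > index.html
git add index.html
git commit -q -m "adding file"

# Add the extra remote and push master to it explicitly
git remote add dev_official "$base/xfusioncorp_official.git"
git remote -v
git push -q dev_official master
```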

Day 27: Git Revert Some Changes

The Nautilus application development team was working on a git repository /usr/src/kodekloudrepos/apps present on Storage server in Stratos DC. However, they reported an issue with the recent commits being pushed to this repo. They have asked the DevOps team to revert repo HEAD to last commit. Below are more details about the task:

In the /usr/src/kodekloudrepos/apps git repository, revert the latest commit (HEAD) to the previous commit. Use revert apps as the message for the new revert commit (all lowercase).

Navigate to Repository

cd /usr/src/kodekloudrepos/apps

Check Current Commit History

git log --oneline

- Shows commit hash, HEAD, and messages. Example output:

269aa04 (HEAD -> master) add data.txt file

3d81254 initial commit

Revert the Latest Commit

git revert HEAD

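- Creates a new commit that undoes the changes of the latest commit. Git opens an editor with the default Revert "..." message; save and close it to complete the commit.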

- Safe for shared repositories since it preserves history.

Change Revert Commit Message

If you want a custom message instead of the default Revert "...":

git commit --amend -m "revert apps"

- Updates the last commit message.

- Example after amend:

111d333 (HEAD -> master) revert apps

269aa04 add data.txt file

3d81254 initial commit

Verify Revert

git log --oneline

- Confirms the latest commit is your revert commit.

git status

- Ensures working directory is clean.
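The revert and the message change can also be done in one pass without an editor: --no-commit stages the inverse of HEAD, and a normal commit then records it with the required message. This is a swapped-in alternative to the revert-then-amend steps above, rehearsed in a throwaway repository whose two commits only mimic the example log.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo="$(mktemp -d)"
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
# Two commits mimicking the example log
git commit -q --allow-empty -m "initial commit"
echo data > data.txt
git add data.txt
git commit -q -m "add data.txt file"

# Stage the inverse of HEAD without committing, then commit with the required message
git revert --no-commit HEAD
git commit -q -m "revert apps"
git log --oneline
```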

Notes / Tips

- Do NOT pass -m to git revert when reverting a normal commit; git revert -m <parent-number> is only for reverting merge commits.

- Use git revert instead of git reset if others are working on the repo, since revert does not rewrite shared history.

- If there are untracked files, Git lists them under git status, but they do not affect the revert.

Day 28: Git Cherry Pick

The Nautilus application development team has been working on a project repository /opt/games.git. This repo is cloned at /usr/src/kodekloudrepos on storage server in Stratos DC. They recently shared the following requirements with the DevOps team:

There are two branches in this repository, master and feature. One of the developers is working on the feature branch and their work is still in progress, however they want to merge one of the commits from the feature branch to the master branch, the message for the commit that needs to be merged into master is Update info.txt. Accomplish this task for them, also remember to push your changes eventually.

Repository Location

- Remote repo: /opt/games.git

- Local clone: /usr/src/kodekloudrepos/games

Step 1: Navigate to the Repository

cd /usr/src/kodekloudrepos/games

Step 2: Check Available Branches

git branch

Step 3: Identify the Required Commit

List commits on the feature branch:

git log feature --oneline

Look for the commit message:

Update info.txt

Copy its commit hash (the hash below is from the author's environment; yours will differ):

d6a24a9ab99c93bc1420434dd6ea28ae997a0763

Step 4: Switch to Master Branch

git checkout master

Step 5: Cherry-Pick the Commit

git cherry-pick d6a24a9ab99c93bc1420434dd6ea28ae997a0763

Successful output indicates the commit is applied:

[master <new-hash>] Update info.txt

Step 6: Verify Repository Status

git status

Expected output:

Your branch is ahead of 'origin/master' by 1 commit

nothing to commit, working tree clean

Step 7: Push Changes to Remote

git push origin master
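Instead of copying the hash by hand, git log can look it up by message. The sketch below rehearses the cherry-pick in a throwaway repository; the feature branch content and the extra Work in progress commit are made up for illustration, and -F makes --grep match the message as a fixed string rather than a regex.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo="$(mktemp -d)"
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
echo "base" > info.txt
git add info.txt
git commit -q -m "initial commit"

# Made-up feature branch: the commit we want, plus unfinished work we do not want
git checkout -q -b feature
echo "new info" >> info.txt
git commit -q -am "Update info.txt"
echo "wip" > wip.txt
git add wip.txt
git commit -q -m "Work in progress"

# Look up the hash by its message on the feature branch
hash="$(git log -n 1 --format=%H -F --grep='Update info.txt' feature)"

git checkout -q master
git cherry-pick "$hash"
git log --oneline master
```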

Day 29: Manage Git Pull Requests

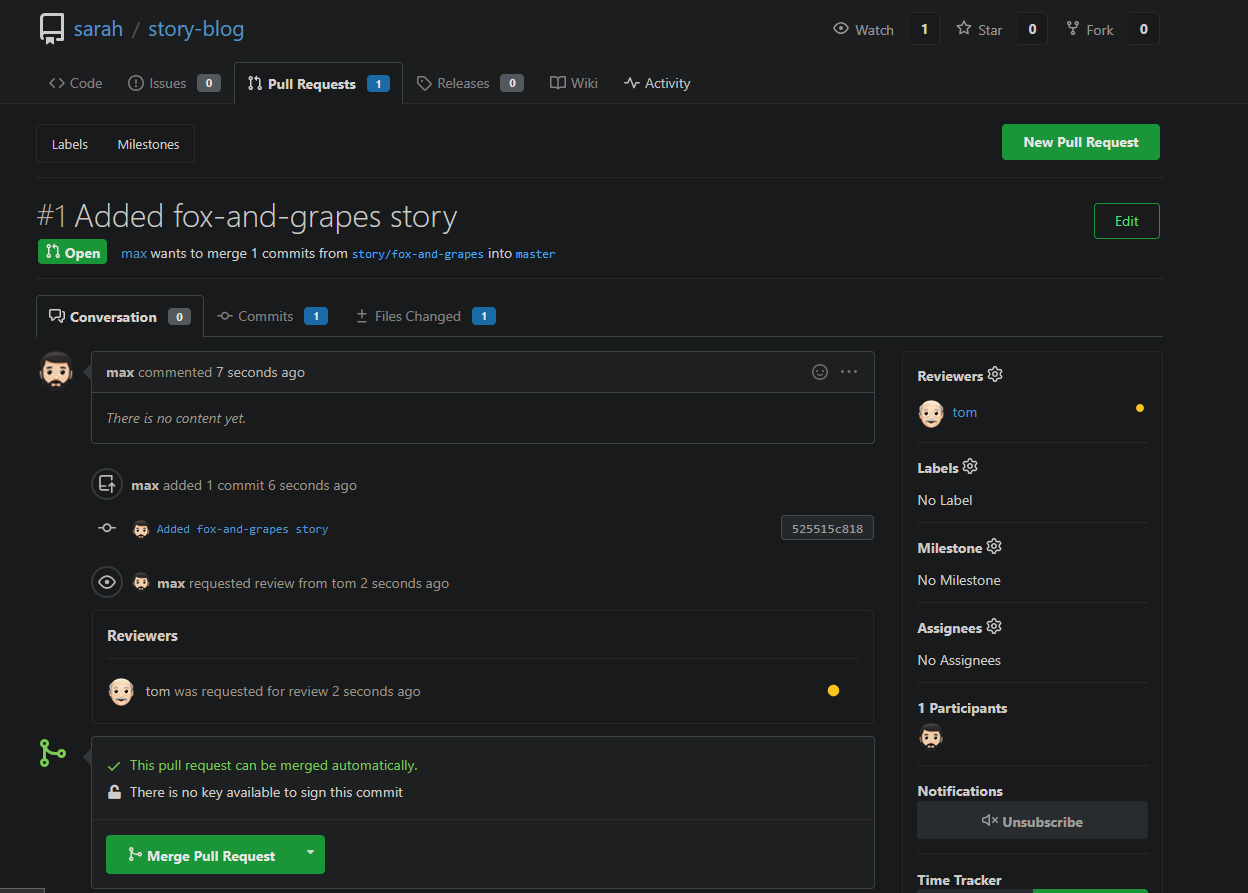

Max wants to push some new changes to one of the repositories, but we don't want people to push directly to the master branch, since that would be the final version of the code. It should only ever have content that has been reviewed and approved. We cannot just allow everyone to push directly to the master branch. So, let's do it the right way as discussed below:

SSH into the storage server using user max, password Max_pass123. There you can find an already cloned repo under max's home directory.

Max has written his story about The 🦊 Fox and Grapes 🍇

Max has already pushed his story to the remote Git repository hosted on Gitea, on the branch story/fox-and-grapes.

Check the contents of the cloned repository. Confirm that you can see Sarah’s story and history of commits by running git log and validate author info, commit message etc.

Max has pushed his story, but it is still not in the master branch. Let's create a Pull Request (PR) to merge Max's story/fox-and-grapes branch into the master branch.

Click on the Gitea UI button on the top bar. You should be able to access the Gitea page.

UI login info:

Username: max

Password: Max_pass123

PR title : Added fox-and-grapes story

PR pull from branch: story/fox-and-grapes (source)

PR merge into branch: master (destination)

Before we can add our story to the master branch, it has to be reviewed. So, let's ask tom to review our PR by assigning him as a reviewer.

Add tom as reviewer through the Git Portal UI

Go to the newly created PR

Click on Reviewers on the right

Add tom as a reviewer to the PR

Now let’s review and approve the PR as user Tom

Log out of the Git Portal UI if you are logged in as max, then log in to the portal as tom:

UI login info:

Username: tom

Password: Tom_pass123

PR title : Added fox-and-grapes story

Review and merge it.

Great stuff!! The story has been merged! 👏

Note: For scenarios like this that require changes in a web UI, please take screenshots so that you can share them with us for review in case your task is marked incomplete. You may also consider using screen recording software such as loom.com to record and share your work.

Day 30: Git hard reset

The Nautilus application development team was working on a git repository /usr/src/kodekloudrepos/official present on Storage server in Stratos DC. This was just a test repository and one of the developers just pushed a couple of changes for testing, but now they want to clean this repository along with the commit history/work tree, so they want to point back the HEAD and the branch itself to a commit with message add data.txt file. Find below more details:

- In the /usr/src/kodekloudrepos/official git repository, reset the git commit history so that there are only two commits in the commit history, i.e. initial commit and add data.txt file.

- Also make sure to push your changes.

Navigate to repository directory

cd /usr/src/kodekloudrepos/official

Check repository status

git status

View commit history (one line format)

git log --oneline

Reset branch to specific commit (remove newer commits)

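This removes every commit after a34829d (the add data.txt file commit) and moves both HEAD and the current branch to it. Use the hash that git log --oneline shows in your own repository: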

git reset --hard a34829d

Verify commit history after reset

git log --oneline

Force push changes to remote repository

git push origin master --force
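As with the cherry-pick, the target hash can be looked up by message rather than copied. This sketch rehearses the reset and force push against a temporary bare remote; the two test commits are hypothetical stand-ins for the developer's pushed changes.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

base="$(mktemp -d)"
# Hypothetical stand-ins for the remote and its clone
git init -q --bare "$base/official.git"
git --git-dir="$base/official.git" symbolic-ref HEAD refs/heads/master
git init -q "$base/official"
cd "$base/official"
git symbolic-ref HEAD refs/heads/master
git remote add origin "$base/official.git"

git commit -q --allow-empty -m "initial commit"
echo data > data.txt
git add data.txt
git commit -q -m "add data.txt file"
# Made-up test commits that should disappear
for n in 1 2; do
  echo "$n" > "test$n.txt"
  git add "test$n.txt"
  git commit -q -m "test commit $n"
done
git push -q origin master

# Find the target by message (fixed-string match) instead of copying a hash
target="$(git log -n 1 --format=%H -F --grep='add data.txt file')"
git reset -q --hard "$target"
git push -q --force origin master
git log --oneline
```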

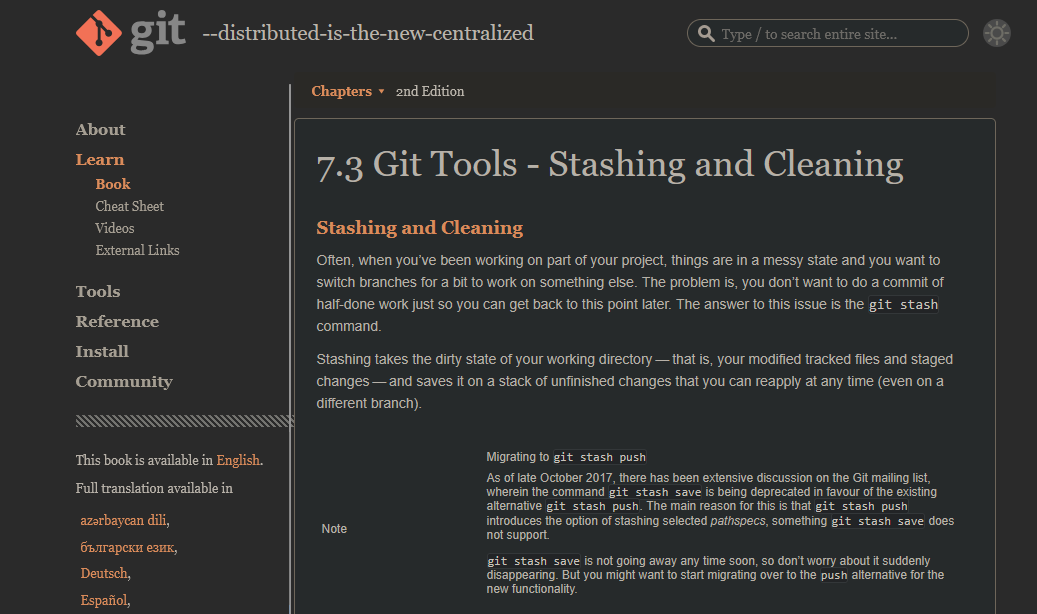

Day 31: Git Stash

The Nautilus application development team was working on a git repository /usr/src/kodekloudrepos/blog present on Storage server in Stratos DC. One of the developers stashed some in-progress changes in this repository, but now they want to restore some of the stashed changes. Find below more details to accomplish this task:

Look for the stashed changes under /usr/src/kodekloudrepos/blog git repository, and restore the stash with stash@{1} identifier. Further, commit and push your changes to the origin.

Git Stash Theory

Check repository status

git status

Check current branch

git branch

View commit history

git log --oneline

List available stashes

git stash list

Example output:

stash@{0}: WIP on master: ba196f3 initial commit

stash@{1}: WIP on master: ba196f3 initial commit

Apply a specific stash (stash@{1})

git stash apply stash@{1}

Verify restored changes

git status

Stage all changes

git add .

Commit restored stash changes

git commit -m "added welcome.txt from stash@{1}"

Push changes to remote (correct branch)

git push origin master
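A throwaway rehearsal of the stash workflow, assuming two made-up stashes where the older one (stash@{1}) holds welcome.txt. git stash show lets you preview an entry before applying it, and git stash apply leaves the entry in the stash list (unlike git stash pop).

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo="$(mktemp -d)"
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
echo "base" > readme.txt
git add readme.txt
git commit -q -m "initial commit"

# Two made-up stashes; the older one (welcome.txt) ends up as stash@{1}
echo "welcome" > welcome.txt
git add welcome.txt
git stash push -q
echo "other" > other.txt
git add other.txt
git stash push -q

git stash list
git stash show --name-only 'stash@{1}'   # preview before applying
git stash apply -q 'stash@{1}'           # apply keeps the entry in the list
git add .
git commit -q -m 'added welcome.txt from stash@{1}'
```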

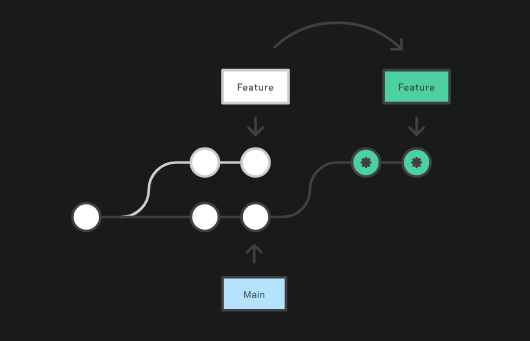

Day 32: Git Rebase

The Nautilus application development team has been working on a project repository /opt/beta.git. This repo is cloned at /usr/src/kodekloudrepos on storage server in Stratos DC. They recently shared the following requirements with DevOps team:

One of the developers is working on the feature branch and their work is still in progress; however, some changes have been pushed into the master branch. The developer now wants to rebase the feature branch onto the master branch without losing any data from the feature branch, and they don't want to add a merge commit by simply merging the master branch into the feature branch. Accomplish this task as per the requirements mentioned.

Also remember to push your changes once done.

Check repository status

git status

View commit history

git log --oneline

List all branches

git branch

Switch to master branch

git checkout master

Fetch latest changes from remote

git fetch origin

Switch to feature branch

git checkout feature

Rebase feature branch onto master

git rebase origin/master

Resolve conflicts (if any)

git add .

git rebase --continue

Push rebased feature branch to remote (history rewritten)

git push origin feature --force

View commit graph for verification

git log --oneline --graph --decorate --all
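To see that the rebase produces a linear history with no merge commit, the steps can be rehearsed against a temporary bare remote. The paths are hypothetical stand-ins for /opt/beta.git and its clone, and the branch contents and commit messages are made up for illustration.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

base="$(mktemp -d)"
git init -q --bare "$base/beta.git"   # hypothetical stand-in for /opt/beta.git
git --git-dir="$base/beta.git" symbolic-ref HEAD refs/heads/master
git init -q "$base/beta"
cd "$base/beta"
git symbolic-ref HEAD refs/heads/master
git remote add origin "$base/beta.git"

# Made-up history: feature branches off, then master moves ahead
echo "base" > base.txt; git add base.txt; git commit -q -m "initial commit"
git checkout -q -b feature
echo "feature" > feature.txt; git add feature.txt; git commit -q -m "feature work"
git checkout -q master
echo "fix" > master.txt; git add master.txt; git commit -q -m "master update"
git push -q origin master feature

# The steps from this section
git fetch -q origin
git checkout -q feature
git rebase -q origin/master
git push -q --force origin feature
git log --oneline --graph --decorate --all
```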

Day 33: Resolve Git Merge Conflicts

Sarah and Max were working on writing some stories, which they have pushed to the repository. Max has recently added some new changes and is trying to push them to the repository, but he is facing some issues. Below you can find more details:

SSH into storage server using user max and password Max_pass123. Under /home/max you will find the story-blog repository. Try to push the changes to the origin repo and fix the issues. The story-index.txt must have titles for all 4 stories. Additionally, there is a typo in The Lion and the Mooose line where Mooose should be Mouse.

Click on the Gitea UI button on the top bar. You should be able to access the Gitea page. You can login to Gitea server from UI using username sarah and password Sarah_pass123 or username max and password Max_pass123.

Note: For scenarios like this that require changes in a web UI, please take screenshots so that you can share them with us for review in case your task is marked incomplete. You may also consider using screen recording software such as loom.com to record and share your work.

1. Repository Access

- Logged into the storage server as user max

- Navigated to /home/max/story-blog

2. Pulled Latest Changes

git pull origin master

3. Fixed Issues

Corrected Typo

Updated the story file:

The Lion and the Mooose → The Lion and the Mouse

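Also resolve the merge conflicts left by the pull: remove every conflict marker (<<<<<<<, =======, >>>>>>>) and make sure story-index.txt ends up with the titles of all 4 stories.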

4. Configured Git Identity

git config user.name max

git config user.email max@ststor01.stratos.xfusioncorp.com

5. Committed Changes

git add .

git commit -m "fix: change spelling moose to mouse"

6. Pushed to Remote Repository

git push origin master
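The conflict part of this task can be rehearsed locally. This sketch builds a throwaway repository where two branches add different titles to story-index.txt (the titles, branch name, and commit messages are illustrative, not the real repository content), then resolves the conflict and refuses to commit while any conflict marker remains.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commits work without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

repo="$(mktemp -d)"
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
# Illustrative titles only; the real story-index.txt has four stories
printf '%s\n' "1. The Lion and the Mooose" > story-index.txt
git add story-index.txt
git commit -q -m "Added Index"

# Both branches append a different line at the same place, so the merge conflicts
git checkout -q -b other-side
printf '%s\n' "1. The Lion and the Mooose" "2. The Fox and Grapes" > story-index.txt
git commit -q -am "add fox story"
git checkout -q master
printf '%s\n' "1. The Lion and the Mooose" "2. The Frogs and the Ox" > story-index.txt
git commit -q -am "add frogs story"

git merge other-side || true            # a conflict is expected here
git diff --name-only --diff-filter=U    # lists the conflicted file(s)

# Resolve: keep both titles and fix the typo
printf '%s\n' "1. The Lion and the Mouse" "2. The Frogs and the Ox" "3. The Fox and Grapes" > story-index.txt
if grep -qE '^(<<<<<<<|=======|>>>>>>>)' story-index.txt; then
  echo "conflict markers remain" >&2
  exit 1
fi
git add story-index.txt
git commit -q -m "fix: change spelling moose to mouse"   # concludes the merge
```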

Git Log Verification (Logs Answer)

Output of git log after successful operations:

commit b8b703b0b4359617e43a1183c547f7308affe825

Merge: 0d395c7 75817c8

Author: Linux User <max@ststor01.stratos.xfusioncorp.com>

Date: Fri Jan 16 10:07:56 2026 +0000

fix: change spelling moose to mouse

commit 0d395c7d2a03d571e49bae826788e33642ad613f

Author: Linux User <max@ststor01.stratos.xfusioncorp.com>

Date: Fri Jan 16 10:02:29 2026 +0000

fix: change spelling moose to mouse

commit 909511f4f2c0ad20cfcc4db3e021eb071e7a30aa

Author: Linux User <max@ststor01.stratos.xfusioncorp.com>

Date: Fri Jan 16 09:57:43 2026 +0000

Added the fox and grapes story

commit 75817c899d762226d3481a1c68a9fdaafda9c77f

Author: sarah <sarah@stratos.xfusioncorp.com>

Date: Fri Jan 16 09:57:42 2026 +0000

Added Index

commit a15185df863df6826ec9e1009e8c4fdcd9605738

Merge: 8a9a036 bb0aee5

Author: sarah <sarah@stratos.xfusioncorp.com>

Date: Fri Jan 16 09:57:41 2026 +0000

Merge branch 'story/frogs-and-ox'

commit 8a9a03604b24f08560601b4f7d83013ff8e87689

Author: sarah <sarah@stratos.xfusioncorp.com>

Date: Fri Jan 16 09:57:41 2026 +0000

Fix typo in story title

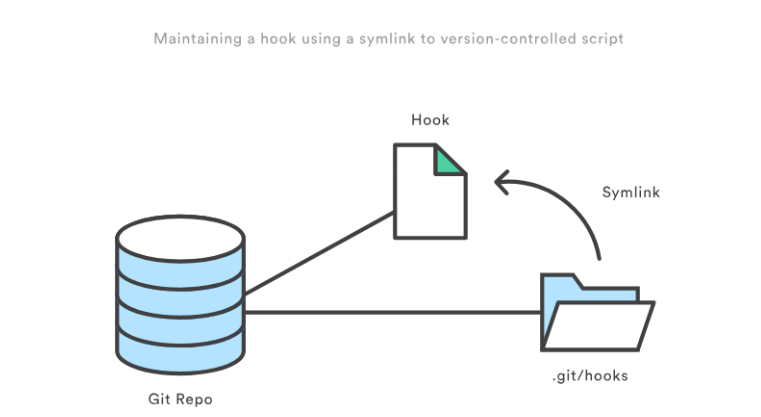

Day 34: Git Hook

The Nautilus application development team was working on a git repository /opt/news.git, which is cloned under the /usr/src/kodekloudrepos directory on the Storage server in Stratos DC. The team wants to set up a hook on this repository; please find more details below:

- Merge the feature branch into the master branch, but before pushing your changes, complete the point below.

- Create a post-update hook in this git repository so that whenever any changes are pushed to the master branch, it creates a release tag with the name release-2023-06-15, where 2023-06-15 is supposed to be the current date. For example, if today is 20th June, 2023, then the release tag must be release-2023-06-20. Make sure you test the hook at least once and create a release tag for today's release.

- Finally, remember to push your changes.

Note: Perform this task using the natasha user, and ensure the repository or existing directory permissions are not altered.

1. Navigate to working repository

cd /usr/src/kodekloudrepos/news

Checked repository status and branches:

git status

git branch

2. Merge feature branch into master

git checkout master

git merge feature

3. Create post-update hook in bare repository

Navigate to hooks directory:

cd /opt/news.git/hooks

ls

Create hook file:

vi post-update

Hook content:

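#!/bin/bash

# post-update receives the name of every updated ref as an argument

for refname in "$@"; do

  if [[ "$refname" == "refs/heads/master" ]]; then

    TAG="release-$(date +%Y-%m-%d)"

    # The hook runs inside the bare repo, so tag the tip of master there

    git tag -f "$TAG" refs/heads/master

  fi

done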

Make executable:

chmod +x post-update

4. Push changes to trigger hook

cd /usr/src/kodekloudrepos/news

git push origin master

Push output:

To /opt/news.git

38f6832..279d796 master -> master

5. Verify release tag creation

cd /opt/news.git

git tag

Result:

release-2026-01-16

(The date reflects the current system date.)
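An end-to-end rehearsal of the hook, assuming temporary stand-ins for /opt/news.git and the working clone (both hypothetical). It installs loop-over-refs logic as a post-update hook in a local bare repo, pushes master to fire it, and lists the resulting tag; the final fetch brings the tag into the clone as well.

```bash
#!/usr/bin/env bash
set -eu
# Throwaway identity so the demo commit works without global git config
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

base="$(mktemp -d)"
git init -q --bare "$base/news.git"   # hypothetical stand-in for /opt/news.git
git --git-dir="$base/news.git" symbolic-ref HEAD refs/heads/master
mkdir -p "$base/news.git/hooks"

# post-update hook: tag master with today's date whenever master is updated
cat > "$base/news.git/hooks/post-update" <<'EOF'
#!/bin/bash
for refname in "$@"; do
  if [[ "$refname" == "refs/heads/master" ]]; then
    git tag -f "release-$(date +%Y-%m-%d)" refs/heads/master
  fi
done
EOF
chmod +x "$base/news.git/hooks/post-update"

# Working repository (stand-in for the clone); the push fires the hook
git init -q "$base/news"
cd "$base/news"
git symbolic-ref HEAD refs/heads/master
git remote add origin "$base/news.git"
git commit -q --allow-empty -m "initial commit"
git push -q origin master

git --git-dir="$base/news.git" tag --list 'release-*'
git fetch -q --tags origin   # bring the new tag into the working repository
```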

Day 35: Install Docker Packages and Start Docker Service

The Nautilus DevOps team aims to containerize various applications following a recent meeting with the application development team. They intend to conduct testing with the following steps:

- Install docker-ce and docker compose packages on App Server 1.

- Initiate the docker service.

System Information

The operating system details were verified using:

cat /etc/os-release

1. Install required dependencies

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

2. Add Docker official repository

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

3. Install Docker CE and Docker Compose packages

sudo yum install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin -y

Installed components:

- docker-ce

- docker-ce-cli

- containerd.io

- docker-buildx-plugin

- docker-compose-plugin

4. Enable and start Docker service

sudo systemctl enable --now docker

5. Verify Docker installation

sudo docker run hello-world

Day 36: Deploy Nginx Container on Application Server

The Nautilus DevOps team is conducting application deployment tests on selected application servers. They require a nginx container deployment on Application Server 3. Complete the task with the following instructions:

- On Application Server 3, create a container named nginx_3 using the nginx image with the alpine tag. Ensure the container is in a running state.

Steps Performed

Ran the Docker container with the required name and image:

docker run -d --name nginx_3 nginx:alpine

Verified the container status:

docker ps

Day 37: Copy File to Docker Container

The Nautilus DevOps team possesses confidential data on App Server 3 in the Stratos Datacenter. A container named ubuntu_latest is running on the same server.

Copy an encrypted file /tmp/nautilus.txt.gpg from the docker host to the ubuntu_latest container located at /opt/. Ensure the file is not modified during this operation.

Steps Performed

Check the container name:

docker ps

Copy the encrypted file from the host to the container:

docker cp /tmp/nautilus.txt.gpg ubuntu_latest:/opt/

Access the container to verify the file:

docker exec -it ubuntu_latest bash

Navigate to the destination directory and confirm the file exists:

cd /opt

ls

Day 38: Pull Docker Image

Nautilus project developers are planning to start testing on a new project. As per their meeting with the DevOps team, they want to test containerized environment application features. As per details shared with DevOps team, we need to accomplish the following task: a. Pull busybox:musl image on App Server 2 in Stratos DC and re-tag (create new tag) this image as busybox:blog.

Steps Performed

- Pulled the BusyBox image with the musl tag:

docker pull busybox:musl

- Created a new tag named busybox:blog from the pulled image:

docker tag busybox:musl busybox:blog

- Verified the images:

docker images | grep busybox

Day 39: Create a Docker Image From Container

One of the Nautilus developers was working to test new changes on a container. He wants to keep a backup of his changes to the container. A new request has been raised for the DevOps team to create a new image from this container. Below are more details about it: a. Create an image beta:devops on Application Server 2 from a container ubuntu_latest that is running on the same server.

Steps Performed

- Verified that the container is running:

docker ps | grep ubuntu_latest

- Created a new image from the running container:

docker commit ubuntu_latest beta:devops

- Verified the new image:

docker images | grep beta

Day 40: Docker EXEC Operations

One of the Nautilus DevOps team members was working to configure services on a kkloud container that is running on App Server 3 in Stratos Datacenter. Due to some personal work he is on PTO for the rest of the week, but we need to finish his pending work ASAP. Please complete the remaining work as per details given below:

a. Install apache2 in kkloud container using apt that is running on App Server 3 in Stratos Datacenter.

b. Configure Apache to listen on port 6200 instead of default http port. Do not bind it to listen on specific IP or hostname only, i.e it should listen on localhost, 127.0.0.1, container ip, etc.

c. Make sure Apache service is up and running inside the container. Keep the container in running state at the end.

Steps Performed

- Accessed the running container:

docker exec -it kkloud bash

- Updated the package index and installed Apache2:

apt update && apt install -y apache2

- Configured Apache to listen on port 6200:

sed -i 's/Listen 80/Listen 6200/' /etc/apache2/ports.conf

- Vim is not installed in the container, so sed is used to edit the file instead of an editor.

- Restarted Apache service to apply changes:

service apache2 restart

- Verified that Apache is running:

service apache2 status

- Exited the container, keeping it in running state:

exit

docker ps

Day 41: Write a Docker File

As per recent requirements shared by the Nautilus application development team, they need custom images created for one of their projects. Several of the initial testing requirements have already been shared with the DevOps team. Therefore, create a Dockerfile at /opt/docker/Dockerfile (please keep the D of Dockerfile capitalized) on App server 1 in Stratos DC and configure it to build an image with the following requirements:

a. Use ubuntu:24.04 as the base image.

b. Install apache2 and configure it to work on 3003 port. (do not update any other Apache configuration settings like document root etc).

Steps Performed

- Go to the directory /opt/docker/ and create the Dockerfile:

cd /opt/docker/

vi Dockerfile

Paste the following content into the Dockerfile:

FROM ubuntu:24.04

ENV DEBIAN_FRONTEND=noninteractive

RUN apt-get update && apt-get install -y apache2 && apt-get clean

RUN sed -i 's/Listen 80/Listen 3003/' /etc/apache2/ports.conf

RUN sed -i 's/:80/:3003/' /etc/apache2/sites-available/000-default.conf

EXPOSE 3003

CMD ["apachectl", "-D", "FOREGROUND"]

Build the image from the /opt/docker/ folder:

docker build -t nautilus-app-image:latest .

Day 42: Create a Docker Network

The Nautilus DevOps team needs to set up several docker environments for different applications. One of the team members has been assigned a ticket where he has been asked to create some docker networks to be used later. Complete the task based on the following ticket description:

a. Create a docker network named as media on App Server 3 in Stratos DC.

b. Configure it to use bridge drivers.

c. Set it to use subnet 10.10.1.0/24 and iprange 10.10.1.0/24.

Steps Performed

[root@stapp03 banner]# docker network create --driver bridge --subnet 10.10.1.0/24 --ip-range 10.10.1.0/24 media

1a0b4591d6d61da07c3dfgdf3454356732ff63d540bcb27fghtryt756765ea

[root@stapp03 banner]# docker network

Usage: docker network COMMAND

Manage networks

Commands:

connect Connect a container to a network

create Create a network

disconnect Disconnect a container from a network

inspect Display detailed information on one or more networks

ls List networks

prune Remove all unused networks

rm Remove one or more networks

Run 'docker network COMMAND --help' for more information on a command.

[root@stapp03 banner]# docker network ls

NETWORK ID NAME DRIVER SCOPE

0c0f46f7bc87 bridge bridge local

33d05cba4099 host host local

1a0b4591d6d6 media bridge local

6f6dc1599b7e none null local

I found the official Docker documentation to be the best guide for Docker networking.
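The IP range is meant to be a sub-block of the subnet. Here both are the same /24, so the whole block is available for container addresses. The pure-shell sketch below illustrates the containment arithmetic for IPv4 CIDR blocks. The helper names ip_to_int, cidr_bounds and range_within_subnet are hypothetical. Docker performs its own validation, so this is only an illustration.

```shell
#!/bin/sh
# Sketch: check that an --ip-range CIDR lies inside a --subnet CIDR (IPv4 only).
# All helper names are hypothetical; Docker validates these values itself.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1            # split "10.10.1.0" into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print the first and last integer addresses of a CIDR block, e.g. 10.10.1.0/24.
cidr_bounds() {
    addr=${1%/*}
    prefix=${1#*/}
    size=$(( 1 << (32 - prefix) ))
    base=$(( $(ip_to_int "$addr") & ~(size - 1) ))
    echo "$base $(( base + size - 1 ))"
}

# Succeed if CIDR $2 (the IP range) lies entirely within CIDR $1 (the subnet).
range_within_subnet() {
    set -- $(cidr_bounds "$1") $(cidr_bounds "$2")
    [ "$3" -ge "$1" ] && [ "$4" -le "$2" ]
}

for range in 10.10.1.0/24 10.10.1.128/25 10.10.2.0/24; do
    if range_within_subnet 10.10.1.0/24 "$range"; then
        echo "$range fits inside 10.10.1.0/24"
    else
        echo "$range is outside 10.10.1.0/24"
    fi
done
```

The first two ranges fit inside the subnet. The third, 10.10.2.0/24, does not.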

Day 43: Docker Ports Mapping

The Nautilus DevOps team is planning to host an application on an nginx-based container. A number of tickets have already been created for similar tasks. One of them has been assigned to set up an nginx container on Application Server 3 in Stratos Datacenter. Please perform the task as per the details mentioned below:

a. Pull nginx:stable docker image on Application Server 3.

b. Create a container named demo using the image you pulled.

c. Map host port 6300 to container port 80. Please keep the container in running state.

Step 1: Pull the NGINX Stable Image


docker pull nginx:stable

The nginx:stable image was pulled from Docker Hub to ensure a reliable and production-ready version of NGINX is available locally on the server.

Step 2: Create and Run the Container


docker run -d --name demo -p 6300:80 nginx:stable

A container named demo was created and started in detached mode using the pulled image. The host port 6300 was mapped to container port 80, allowing external access to the NGINX web service.

Step 3: Verify Container Status


docker ps

This command was used to confirm that the demo container is running successfully and that the port mapping is active.

CONTAINER ID   IMAGE          COMMAND                  CREATED         STATUS         PORTS                  NAMES
f738f0094168   nginx:stable   "/docker-entrypoint.…"   3 seconds ago   Up 1 second    0.0.0.0:6300->80/tcp   demo
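The PORTS column shows each mapping as a host address, host port, container port and protocol. The sketch below extracts those fields from the value shown above using only POSIX parameter expansion. The helper name parse_port_mapping is hypothetical. It handles one mapping at a time; hosts with IPv6 enabled may also list a second, comma-separated `:::6300->80/tcp` entry.

```shell
#!/bin/sh
# Sketch: split one docker ps PORTS entry such as "0.0.0.0:6300->80/tcp"
# into host address, host port, container port and protocol.
# parse_port_mapping is a hypothetical helper, not a Docker command.
parse_port_mapping() {
    host_part=${1%%->*}                  # e.g. 0.0.0.0:6300
    container_part=${1#*->}              # e.g. 80/tcp
    host_addr=${host_part%:*}            # e.g. 0.0.0.0
    host_port=${host_part##*:}           # e.g. 6300
    container_port=${container_part%/*}  # e.g. 80
    proto=${container_part#*/}           # e.g. tcp
    echo "$host_addr $host_port $container_port $proto"
}

parse_port_mapping "0.0.0.0:6300->80/tcp"
```

The call above prints `0.0.0.0 6300 80 tcp`. For a live container, the input string could come from `docker ps --filter name=demo --format '{{.Ports}}'`.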

Step 4: Validate NGINX Service Accessibility


curl http://localhost:6300

The command returned the default NGINX welcome page, verifying that the web server is running correctly and accessible through the mapped port.
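Reading the curl output by eye works, but a check that exits non-zero on failure is easier to script. This is a minimal sketch that assumes the default page still carries NGINX's stock title, "Welcome to nginx!". The function name is_nginx_welcome is hypothetical.

```shell
#!/bin/sh
# Sketch: succeed only if the HTML on stdin looks like NGINX's default
# welcome page. is_nginx_welcome is a hypothetical helper name.
is_nginx_welcome() {
    grep -q '<title>Welcome to nginx!</title>'
}

# Example with a canned response. Against the real container, use:
#   curl -s http://localhost:6300 | is_nginx_welcome
sample='<html><head><title>Welcome to nginx!</title></head></html>'
if printf '%s\n' "$sample" | is_nginx_welcome; then
    echo "NGINX default page is being served"
fi
```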

Day 44: Write a Docker Compose File

The Nautilus application development team shared static website content that needs to be hosted on the httpd web server using a containerised platform. The team has shared details with the DevOps team, and we need to set up an environment according to those guidelines. Below are the details:

a. On App Server 1 in Stratos DC, create a container named httpd using a Docker Compose file at /opt/docker/docker-compose.yml (use this exact file name).

b. Use the httpd image (preferably the latest tag) for the container and make sure the container is named httpd; any name can be used for the service.

c. Map port 80 of the container to port 8085 on the Docker host.

d. Map the container's /usr/local/apache2/htdocs directory to the existing /opt/security directory on the Docker host (do not modify any data within these locations).

Docker Compose Configuration

The following Docker Compose file was created at the exact required path:

/opt/docker/docker-compose.yml


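# Newer Docker Compose v2 releases ignore this key and warn that it is obsolete
version: "3.8"
services:
  web:                          # service name can be anything
    image: httpd:latest
    container_name: httpd       # the container itself must be named httpd
    ports:
      - "8085:80"               # host port 8085 -> container port 80
    volumes:
      - /opt/security:/usr/local/apache2/htdocs   # existing host dir -> Apache document root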
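With the short volume syntax, Docker creates the host directory if it does not already exist. A typo in /opt/security would therefore quietly serve an empty, newly created folder instead of the team's content. The sketch below checks the host side of a HOST:CONTAINER spec before you run docker compose up. The helper name check_bind_source is hypothetical, and the check assumes the host path itself contains no colon.

```shell
#!/bin/sh
# Sketch: confirm that the host side of a short-syntax bind mount
# ("HOST:CONTAINER" or "HOST:CONTAINER:ro") is an existing directory.
# check_bind_source is a hypothetical helper, not part of Docker Compose.
check_bind_source() {
    host_path=${1%%:*}    # text before the first colon, e.g. /opt/security
    if [ -d "$host_path" ]; then
        echo "ok: $host_path exists"
    else
        echo "missing: $host_path" >&2
        return 1
    fi
}

check_bind_source "/opt/security:/usr/local/apache2/htdocs" \
    || echo "fix the host path before running docker compose up"
```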

Deployment Steps

Navigated to the Docker directory:

cd /opt/docker/

Created and saved the docker-compose.yml file with the configuration shown above.

Started the container in detached mode:

docker compose up -d

Verified that the container is running:

docker ps
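Note that `docker ps --filter name=httpd` matches any container whose name merely contains httpd. To confirm that a container named exactly httpd is running, the following sketch compares whole names instead. The helper name has_container_named is hypothetical, and the container names in the demo are made up.

```shell
#!/bin/sh
# Sketch: succeed only if some line on stdin equals the given container name.
# has_container_named is a hypothetical helper; -x matches whole lines and
# -F treats the name as a fixed string rather than a regex.
has_container_named() {
    grep -qxF -- "$1"
}

# Against the live host:
#   docker ps --format '{{.Names}}' | has_container_named httpd
printf '%s\n' demo httpd-old | has_container_named httpd \
    || echo "no container named exactly httpd"
```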

Verification

The web service was tested using curl:


curl http://172.16.238.10:8085

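The response is an Apache directory listing ("Index of /") of the bind-mounted /opt/security directory, which confirms that its static content is being served. Because the directory holds index1.html rather than index.html, Apache lists the files instead of serving a default page: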


<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01//EN" "http://www.w3.org/TR/html4/strict.dtd">

<html>

<head>

<title>Index of /</title>

</head>

<body>

<h1>Index of /</h1>

<ul><li><a href="index1.html"> index1.html</a></li>

</ul>

</body></html>
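To see at a glance which files the bind mount exposes, you can extract the links from the listing. This sketch assumes the plain `href="..."` markup shown above; Apache's fancy indexing would add extra sort links. It also uses grep -o, which GNU and BSD grep support but POSIX does not require. The helper name list_index_entries is hypothetical.

```shell
#!/bin/sh
# Sketch: print the link targets from an Apache "Index of /" page on stdin.
# list_index_entries is a hypothetical helper name.
list_index_entries() {
    grep -o 'href="[^"]*"' | sed 's/^href="//; s/"$//'
}

# Against the live container:
#   curl -s http://172.16.238.10:8085 | list_index_entries
printf '%s\n' '<ul><li><a href="index1.html"> index1.html</a></li>' | list_index_entries
```

Given the line from the response above, it prints `index1.html`.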

Day 45: Resolve Dockerfile Issues

The Nautilus DevOps team is working to create new images per requirements shared by the development team. One of the team members is working to create a Dockerfile on App Server 2 in Stratos DC. While working on it, she ran into issues in which the docker build is failing and displaying errors. Look into the issue and fix it to build an image as per the details mentioned below:

a. The Dockerfile is placed on App Server 2 under the /opt/docker directory.

b. Fix the issues with this file and make sure it is able to build the image.

c. Do not change the base image, any other valid configuration within the Dockerfile, or any of the data being used (for example, index.html).

Note: once you click the FINISH button, all existing containers will be destroyed and a new image will be built from your Dockerfile.

Problem found

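The Dockerfile referenced the Apache configuration file at:

/usr/local/apache2/conf.d/httpd.conf

- This is not the correct path. The httpd image has no conf.d directory, so the sed commands that edit this file fail and the build stops.
- The correct path is:

/usr/local/apache2/conf/httpd.conf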
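Instead of editing by hand, you can correct the path in place with sed. This is a sketch that assumes the wrong path appears literally in the Dockerfile. The helper name fix_httpd_conf_path is hypothetical, and the demo line is an assumed example of a broken instruction, not the original file. Using # as the sed delimiter avoids escaping every slash in the paths.

```shell
#!/bin/sh
# Sketch: replace the wrong conf.d path with the real httpd.conf path in file $1.
# fix_httpd_conf_path is a hypothetical helper; requires GNU sed for -i.
fix_httpd_conf_path() {
    sed -i 's#/usr/local/apache2/conf\.d/httpd\.conf#/usr/local/apache2/conf/httpd.conf#g' "$1"
}

# Demo on a throwaway file (assumed example of a broken line).
# On the server, back up first, e.g.:
#   cp /opt/docker/Dockerfile /opt/docker/Dockerfile.bak
demo=$(mktemp)
printf '%s\n' 'FROM httpd:2.4.43' \
    'RUN sed -i "s/Listen 80/Listen 8080/g" /usr/local/apache2/conf.d/httpd.conf' > "$demo"
fix_httpd_conf_path "$demo"
cat "$demo"
rm -f "$demo"
```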

Fixed Dockerfile


FROM httpd:2.4.43

RUN sed -i "s/Listen 80/Listen 8080/g" /usr/local/apache2/conf/httpd.conf

RUN sed -i '/LoadModule\ ssl_module modules\/mod_ssl.so/s/^#//g' /usr/local/apache2/conf/httpd.conf

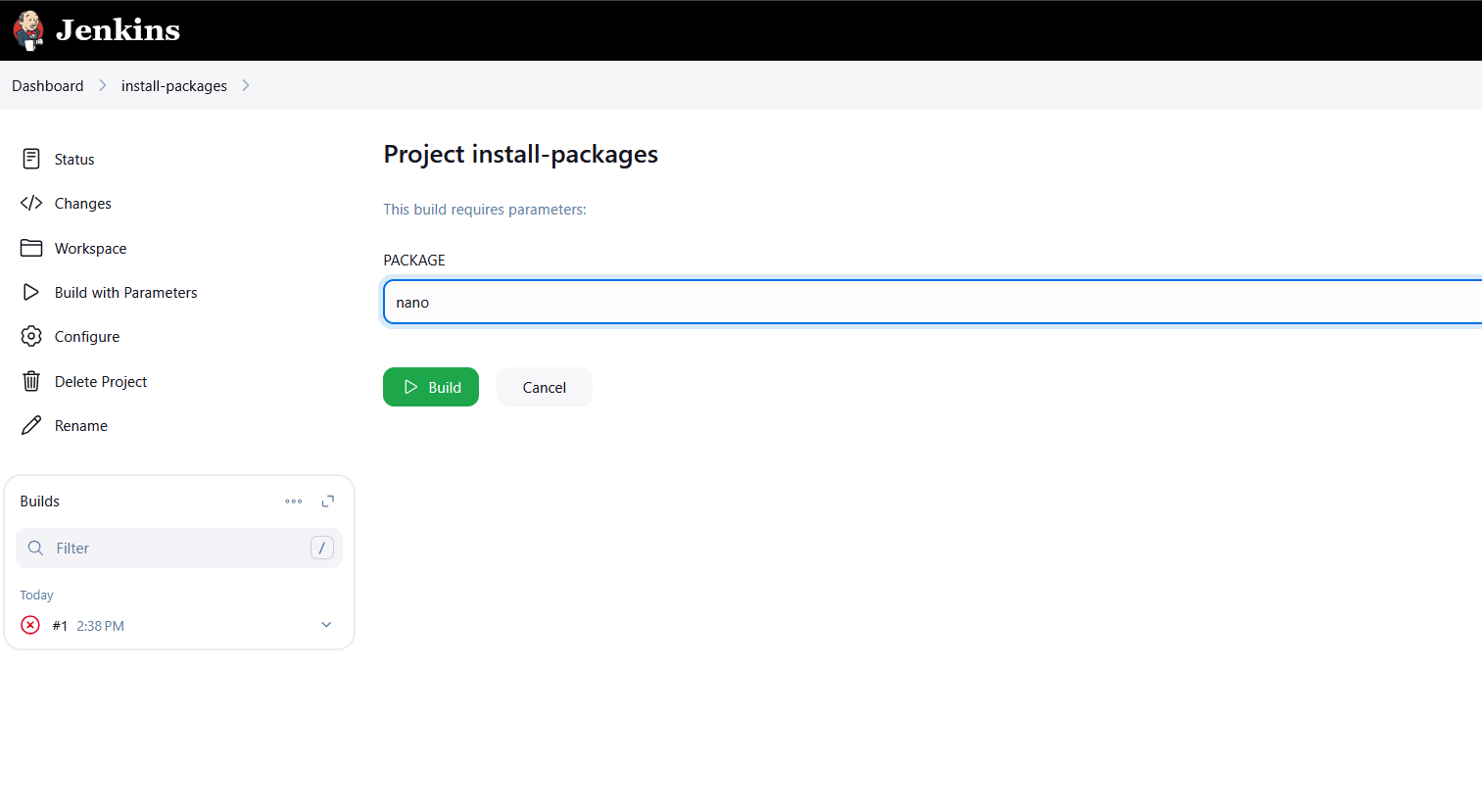

RUN sed -i '/LoadModule\ socache_shmcb_module modules\/mod_socache_shmcb.so/s/^#//g' /usr/local/apache2/conf/httpd.conf